Calibrated Probability Assessment

Calibrated probability assessments are subjective assessments of probabilities or confidence intervals that have come from individuals who have been trained specifically to minimize certain biases in probability assessment and whose skill in such assessments has been measured. Although subjective probabilities are relied upon for estimates of key variables in major business decisions, research shows that the subject matter experts who provide these estimates are consistently overconfident. Fortunately, it can be shown that training significantly improves the ability of experts to quantify their own uncertainty about these estimated values.[1]

When a calibrated person says they are "80% confident" in each of 100 predictions they made, they will get about 80% of them correct. Likewise, they will be right 90% of the time they say they are 90% certain, and so on. Calibration training improves subjective probabilities because most people are either "overconfident" or "under-confident" (usually the former). By practicing with a series of trivia questions, it is possible for subjects to fine-tune their ability to assess probabilities. For example, a subject may be asked:

True or False: "A hockey puck fits in a golf hole"

Confidence: Choose the probability that best represents your chance of getting this question right...

50% 60% 70% 80% 90% 100%

If a person has no idea whatsoever, they will say they are only 50% confident. If they are absolutely certain they are correct, they will say 100%. But most people will answer somewhere in between. If a calibrated person is asked a large number of such questions, they will get about as many correct as they expected. An uncalibrated person who is systematically overconfident may say they are 90% confident in a large number of questions where they only get 70% of them correct. On the other hand, an uncalibrated person who is systematically underconfident may say they are 50% confident in a large number of questions where they actually get 70% of them correct.

Alternatively, the trainee will be asked to provide a numeric range for a question like, "In what year did Napoleon invade Russia?", with the instruction that the provided range is to represent a 90% confidence interval. That is, the test-taker should be 90% confident that the range contains the correct answer.

Calibration training generally involves taking a battery of such tests. Feedback is provided between tests and the subjects refine their probabilities. Calibration training may also involve learning other techniques that help to compensate for consistent over- or under-confidence. Since subjects are better at placing odds when they pretend to bet money, subjects are taught how to convert calibration questions into a type of betting game, which has been shown to improve their subjective probabilities. Various collaborative methods, such as prediction markets, have been developed so that subjective estimates from multiple individuals can be taken into account.[2]
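The comparison between stated confidence and observed accuracy described above can be sketched in a few lines of Python. This is only an illustration: the function name `calibration_table` and the sample answers are hypothetical, chosen to mirror the 90%-stated, 70%-correct and 50%-stated, 70%-correct cases mentioned in the text.

```python
from collections import defaultdict


def calibration_table(responses):
    """Group answers by stated confidence and compare with observed accuracy.

    `responses` is an iterable of (stated_confidence, was_correct) pairs,
    for example (0.9, True). Returns {confidence: (count, hit_rate)}.
    """
    buckets = defaultdict(list)
    for confidence, was_correct in responses:
        buckets[confidence].append(bool(was_correct))
    return {
        confidence: (len(outcomes), sum(outcomes) / len(outcomes))
        for confidence, outcomes in sorted(buckets.items())
    }


# Hypothetical data (not from any real training session):
# 10 answers given at 90% confidence, 7 of them correct (overconfident), and
# 10 answers given at 50% confidence, 7 of them correct (underconfident).
responses = (
    [(0.9, True)] * 7 + [(0.9, False)] * 3
    + [(0.5, True)] * 7 + [(0.5, False)] * 3
)

table = calibration_table(responses)
for confidence, (count, hit_rate) in table.items():
    gap = hit_rate - confidence  # negative: overconfident, positive: underconfident
    print(f"stated {confidence:.0%}  n={count}  observed {hit_rate:.0%}  gap {gap:+.0%}")
```

A well-calibrated subject would show gaps close to zero at every confidence level, provided each level contains enough answers for the observed rate to be meaningful.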

A major problem with expert estimates is overconfidence. To overcome this, Hubbard advocates using calibrated probability assessments to quantify analysts' abilities to make estimates. Calibration assessments involve getting analysts to answer trivia questions and eliciting a confidence interval for each answer. The confidence intervals are then checked against the proportion of correct answers. Essentially, this assesses experts' abilities to estimate by tracking how often they are right. It has been found that people can improve their ability to make subjective estimates through calibration training, that is, repeated calibration testing followed by feedback.[3]
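Checking confidence intervals against the proportion of correct answers can be illustrated with a short Python sketch. The helper `interval_hit_rate` is a hypothetical name, and the ranges below are invented answers to five trivia questions; they are not taken from any actual assessment.

```python
def interval_hit_rate(intervals, truths):
    """Return the fraction of (low, high) ranges that contain the true value."""
    if len(intervals) != len(truths):
        raise ValueError("each interval needs exactly one true value")
    if not intervals:
        raise ValueError("at least one interval is required")
    hits = [low <= truth <= high for (low, high), truth in zip(intervals, truths)]
    return sum(hits) / len(hits)


# Hypothetical 90% confidence intervals given by one analyst.
intervals = [
    (1800, 1815),        # year Napoleon invaded Russia
    (1950, 1965),        # year of the first crewed Moon landing
    (90, 110),           # boiling point of water at sea level, in Celsius
    (40, 52),            # number of U.S. states
    (250_000, 290_000),  # speed of light, in km/s
]
truths = [1812, 1969, 100, 50, 299_792]

stated_confidence = 0.90
observed = interval_hit_rate(intervals, truths)
print(f"stated {stated_confidence:.0%}, observed {observed:.0%}")
if observed < stated_confidence:
    print("Ranges were too narrow: a sign of overconfidence.")
```

With only five questions the observed rate is very noisy, so a real assessment would use a much larger battery before drawing conclusions about an analyst's calibration.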

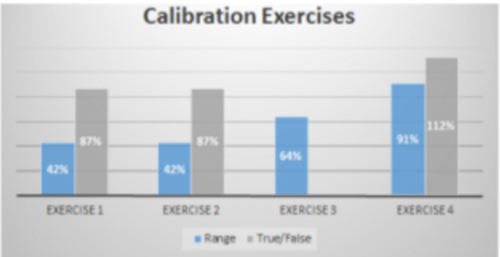

A subjective probability assessment calibration training session revealed the following results (figure below):

source:Health Guard Security

What do these numbers mean? Essentially, they show that collectively, and for the most part individually, the group tested is capable of providing fairly accurate subjective probability assessments; the group showed marked improvement between the first and the last exercises. This is important because humans are generally very bad at estimating probabilities, especially when multiple variables or factors are involved. There are several reasons for this shortcoming, including tendencies called cognitive biases, which can distort judgment and decision making, sometimes catastrophically. Being “calibrated” is a valuable skill in any type of quantitative analysis, especially risk analysis, because risk by definition involves uncertainty and the estimation of probabilities. Many professionals struggle when trying to describe cyber risk to a business person in qualitative terms (“high/medium/low” or “critical/non-critical”) or in pseudo-quantitative terms (this risk is a “5” and this one is a “10”). Such descriptions are not very effective and can lead to misinformed decision making. Many industries are either looking at or moving toward quantitative analysis, and the World Economic Forum has stated that the world needs to do a better job of quantitatively analyzing and measuring cyber risk.[4]

References

- [1] Definition of Calibrated Probability Assessment. Hubbard Research.

- [2] What is Calibrated Probability Assessment? Wikipedia.

- [3] Assessing Expert Abilities through Caliberated Training. Jeran Binning.

- [4] Understanding and Interpreting Calibration Training Results. Health Guard Security.