Data Monitoring

What is Data Monitoring?[1]

Data monitoring is the process of reviewing the information entered into the database for accuracy and completeness. The data can also be evaluated to ensure the entered information will accomplish the goals of the registry or clinical study. Data monitoring is performed proactively, either continuously or on a set schedule, with the goal of ensuring high-quality data. It can catch values that are inconsistent with those recorded for other participants and therefore may need verification. It can also detect trends or patterns that differ from information collected in the past. Interventional clinical trials are most often monitored by a Data Monitoring Committee (DMC).

The Need for Data Monitoring[2]

Data monitoring allows an organization to proactively maintain a high, consistent standard of data quality. By checking data routinely as it is stored within applications, organizations can avoid the resource-intensive pre-processing of data before it is moved. With data monitoring, data is quality-checked when it is created rather than just before it is moved.

Attributes of Data Monitoring[3]

Simply put, monitoring data is the act of having procedures, technologies, and benchmarks in place for tracking the quality and usefulness of data.

The first step to monitoring data is establishing data quality metrics or criteria that are tied to specific business objectives. After establishing the groundwork, you will compare the results over time, allowing for improvement and a deeper understanding of how your data can best be used.

Some critical attributes of data quality that are frequently monitored by organizations include:

- Completeness

- Uniformity

- Accuracy

- Uniqueness

Data quality monitoring helps you reveal problem areas where the most inaccuracies are observed, track unusual or abnormal behaviors, and identify where you should focus your data quality initiatives.
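The four attributes listed above can each be expressed as a simple ratio. The following is a minimal sketch, not a reference implementation: the sample records, the field names, the email pattern, and the age range are all hypothetical, and the range check is only a rough proxy for accuracy, which in practice usually requires comparison against a trusted source.

```python
import re

# Hypothetical sample records; field names and values are illustrative only.
records = [
    {"id": 1, "email": "a@example.com", "age": 34},
    {"id": 2, "email": "b@example.com", "age": None},
    {"id": 2, "email": "B@EXAMPLE.COM", "age": 29},
    {"id": 4, "email": "not-an-email", "age": 210},
]

def completeness(rows, field):
    """Share of rows where the field is present and not None."""
    return sum(r.get(field) is not None for r in rows) / len(rows)

def uniqueness(rows, field):
    """Share of distinct values among all values of the field."""
    values = [r.get(field) for r in rows]
    return len(set(values)) / len(values)

def uniformity(rows, field, pattern):
    """Share of non-null values that match one expected format."""
    values = [r[field] for r in rows if r.get(field) is not None]
    return sum(bool(re.fullmatch(pattern, str(v))) for v in values) / len(values)

def accuracy(rows, field, low, high):
    """Share of non-null values inside a plausible range (a proxy only)."""
    values = [r[field] for r in rows if r.get(field) is not None]
    return sum(low <= v <= high for v in values) / len(values)

metrics = {
    "completeness(age)": completeness(records, "age"),
    "uniqueness(id)": uniqueness(records, "id"),
    "uniformity(email)": uniformity(records, "email", r"[a-z0-9.]+@[a-z0-9.]+"),
    "accuracy(age)": accuracy(records, "age", 0, 120),
}

for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Recording these ratios on every run, rather than inspecting them once, is what makes it possible to compare results over time as described above.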

The Importance of Data Monitoring[4]

Data monitoring is important because it helps preserve data integrity and usefulness. By ensuring high-quality data, a business can avoid multiplying ongoing problems with future data interpretation and analysis. Examples of potential problems with data include duplicated or missing data points, vague or indistinguishable data, inconsistent data, and data drift.

Data monitoring can help a business determine the source of data problems and identify solutions. Depending on how the organization uses its data, monitoring can help assure regulatory compliance, reduce costs, or increase profitability, so regular monitoring and correcting data deficiencies is essential.

Elements of an Automated Data Monitoring System[5]

Despite the importance of data, data monitoring capabilities at most companies fall short of expectations. While large tech companies like Netflix and Uber have built sophisticated data-monitoring platforms and processes, many small and even large companies still can’t easily monitor their data. Enterprises often rely on legacy data quality software that doesn’t map to their current data stack. Many startups scrape by with a combination of homegrown scripts and tools like Grafana and Prometheus.

In response to this data monitoring gap, there is an emergent class of companies building data monitoring tools.

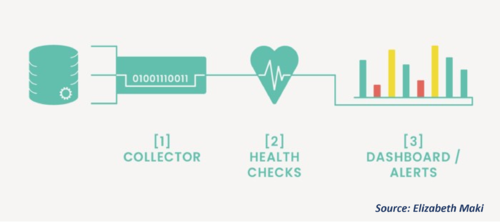

While these tools differ in their approaches, they typically consist of three core parts:

(1) a data collector that connects with the user’s data store,

(2) specific health checks (such as volume, freshness, etc.) that run on the connected data, and

(3) a dashboard/alerting system to let users observe and act on the overall health of their data.
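The three parts can be illustrated with a small sketch built on Python's standard library. Everything here is an assumption made for illustration: the `orders` table, the `loaded_at` column holding ISO 8601 timestamps, the thresholds, and the function names `collect`, `run_checks`, and `alert` do not come from any particular tool.

```python
import sqlite3
from datetime import datetime, timedelta, timezone

def collect(conn, table):
    """Collector: read row count and newest load timestamp from the store.
    The table name is interpolated directly, so only pass trusted names."""
    count, newest = conn.execute(
        f"SELECT COUNT(*), MAX(loaded_at) FROM {table}"
    ).fetchone()
    return {"row_count": count, "newest": newest}

def run_checks(stats, now, min_rows, max_age):
    """Health checks: return a list of failed volume and freshness checks."""
    failures = []
    if stats["row_count"] < min_rows:
        failures.append(f"volume: {stats['row_count']} rows < {min_rows}")
    if stats["newest"] is None:
        failures.append("freshness: no rows loaded")
    else:
        age = now - datetime.fromisoformat(stats["newest"])
        if age > max_age:
            failures.append(f"freshness: newest row is {age} old")
    return failures

def alert(table, failures):
    """Alerting stand-in: format messages; a real system might page or post to chat."""
    return [f"[ALERT] {table}: {failure}" for failure in failures]

# Demo against an in-memory database with two stale rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, loaded_at TEXT)")
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
stale = (now - timedelta(hours=30)).isoformat()
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, stale), (2, stale)])

stats = collect(conn, "orders")
failures = run_checks(stats, now, min_rows=5, max_age=timedelta(hours=24))
alerts = alert("orders", failures)
for line in alerts:
    print(line)
```

Passing `now` in as a parameter, instead of reading the clock inside the check, keeps the freshness check deterministic and easy to test.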

Methods of Data Monitoring[6]

- Speech Analytics: This technique uses artificial intelligence (AI) to monitor calls in real time to identify tone and sentiment, gauge customer emotion and satisfaction, and even assess the skills of agents.

- Text (Interaction) Analytics: Contact centers using chat, email, or social media to interact with customers have many data quality monitoring tools at their disposal. These can scan the text to identify and analyze data. Note: interaction analytics may include speech analytics as well.

- Predictive Analytics: This approach analyzes previous data to identify and predict customer behavior, and finds the most effective method of interaction.

- Performance Analytics: Using custom dashboards, managers can keep track of agent performance and coaching programs for individual agents.

Data Monitoring Techniques[7]

- Data-Driven Approach: In this approach, people manually analyze the data and identify areas that need improvement. It is time-consuming and costly.

- Data Analytics: This approach collects, analyzes, and reports on the performance of a business in real time. It has a low cost, but it can only be used for certain types of businesses, such as manufacturing or retail companies.

- Metrics-Based Approach: This approach uses metrics for assessing the performance of a business without having to do any manual analysis or reporting on the data. This approach is efficient and inexpensive.

Effective Data Monitoring[8]

For a data monitoring system to be useful, it must be:

- Granular: it must indicate specifically where an issue is occurring, and with what code.

- Persistent: you must monitor things in a time series, otherwise you can’t understand where data sets or errors began (lineage).

- Automatic: the more freedom you have to set thresholds and use machine learning and anomaly detection, the less active attention it requires.

- Ubiquitous: you can’t measure just one part of the pipeline.

- Timely: because what good are late alerts?
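The "persistent" and "automatic" qualities can be combined in a simple way: keep a history of a metric and flag new values that deviate strongly from it. The sketch below uses a z-score test as one possible anomaly check; the function name `is_anomalous`, the threshold of 3.0, and the sample row counts are assumptions chosen for illustration, and production systems often use more robust methods.

```python
from statistics import mean, stdev

def is_anomalous(history, latest, z_threshold=3.0):
    """Flag `latest` if it lies more than `z_threshold` sample standard
    deviations away from the mean of the stored history."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # any change from a constant series is unusual
    return abs(latest - mu) / sigma > z_threshold

# Hypothetical daily row counts persisted from earlier monitoring runs.
daily_row_counts = [1000, 1020, 980, 1010, 995, 1005, 990]

print(is_anomalous(daily_row_counts, 1015))  # within normal variation
print(is_anomalous(daily_row_counts, 400))   # sharp drop in volume
```

Because the threshold is derived from the stored history, it adapts as the data changes and needs less manual tuning than a fixed limit.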

Data Monitoring Tool Selection Criteria[9]

The bigger the database server, the more CPU and memory it needs to process its data, so resource usage has to be tracked alongside query performance. A dedicated database monitoring tool is the most reliable way to do this. Because SQL Server is so widely used, monitoring SQL Server performance is a top priority for many organizations. A database monitoring tool can be analyzed and selected against the following criteria:

- The ability to attach to database instances from different DBMSs

- SQL query troubleshooting and optimization

- Database resource and server resource monitoring

- Alerts for resource shortages and performance deterioration

- An easy-to-use interface

- Secure access procedures that include authentication and multiple user accounts

- A free trial, demo, or money-back period for a no-risk assessment

- A reasonable price that reflects the quality of the product and offers value for money

Data Monitoring Tools and Software

Below is a list of some of the Data Monitoring Tools available in the market today:

- SolarWinds Database Performance Analyzer for SQL Server: Includes real-time performance monitoring plus analysis functions. Runs on Windows Server.

- Datadog Database Monitoring: A cloud-based application monitoring service that includes database performance checks.

- ManageEngine Applications Manager: Includes monitoring screens for both SQL-based and NoSQL databases. Runs on Windows Server and Linux.

- SolarWinds AppOptics APM: A comprehensive cloud-based application performance monitor that includes specialized processes for monitoring databases.

- Site24x7 Server Monitoring: An online monitoring package that includes SQL monitoring and analysis functions.

- Paessler PRTG Network Monitor: Database monitoring functions are part of this all-in-one network, server, and applications monitoring. Runs on Windows Server.

- SentryOne SQL Sentry: Live database performance monitoring with automated index defragmentation.

- Atera: A remote management solution for managed service providers that includes database backup automation and supervision.

- dbWatch Database Control: A database-focused tool that unifies monitoring for all databases in an enterprise operated by SQL Server, Oracle, Sybase, MariaDB, MySQL, and Postgres.

- Idera SQL Diagnostic Manager: A specialist database monitor for MySQL or SQL Server.

- AimBetter: This SaaS system remotely monitors database performance and includes the services of database experts for SQL Server, Oracle, and SAP.

- SQL Power Tools: Logs database performance metrics and scans for anomalous behavior to detect any intrusions.

- Red-Gate SQL Monitor: Real-time database monitor with color-coded statuses and some great data visualizations.

- Lepide SQL Server Auditing: A database monitor that is prized for its cybersecurity features.

- ManageEngine Free SQL Health Monitor: A competent free database performance monitor from a leading infrastructure management producer.

- Spiceworks SQL Server Monitoring: Free, ad-supported database performance monitor.

Data Monitoring Committee[10]

A Data Monitoring Committee (DMC) is an independent group of experts that conducts periodic reviews of accumulated interim data during a clinical trial. DMCs are strongly recommended for certain clinical trials by both US FDA and EU EMA guidelines.

Data monitoring committees (DMCs) work closely with investigators and sponsors to monitor trial conduct and safety, assess risks and benefits, and make recommendations to protect the participants of clinical trials.

The DMC will typically operate under a detailed charter outlining what data the committee will review, how members are selected and vetted for conflicts, and other operational considerations governing how the committee will be run, including the format for reporting and providing recommendations back to the sponsor.

Benefits of Data Monitoring[11]

- A holistic view of the data ecosystem is created and maintained, providing increased visibility.

- Agility and speed are increased across an organization because data can be used immediately.

- Areas where inaccuracies are most commonly found can be identified and monitored to find the causes of the issues.

- Challenges associated with the propagation of erroneous or inconsistent data are eliminated by checking data at the time of its creation.

- Closely monitored data enables completeness, consistency, and accuracy.

- Connections can be established between data from disparate sources across an organization.

- Data can more easily be standardized.

- Established data quality metrics can be tracked and reported on to provide insights into adherence to standards and goals.

- New data quality parameters can be added to data monitoring processes to keep pace with changing priorities or concerns within an organization.

- Proactive checking of data against rules helps maintain high-quality, consistent data.

- Time and money are saved by eliminating manual data checks.

- Time spent preparing data for use is minimized, because data monitoring facilitates ongoing data quality.

References

- [1] What is Data Monitoring?

- [2] Why perform data monitoring?

- [3] What is data monitoring software?

- [4] Why is data monitoring important?

- [5] Elements of an Automated Data Monitoring System

- [6] What are the different methods of data monitoring?

- [7] What are the Available Data Quality Monitoring Techniques?

- [8] Qualities of an effective data monitoring system

- [9] Methodology for selecting a database monitoring tool

- [10] What Is an Independent Data Monitoring Committee (DMC)?

- [11] Benefits of Data Monitoring