Data Mining

What is Data Mining?

Data mining is a process used by companies to turn raw data into useful information. By using software to look for patterns in large batches of data, businesses can learn more about their customers to develop more effective marketing strategies, increase sales and decrease costs. Data mining depends on effective data collection, warehousing, and computer processing.[1]

In simple terms, data mining is the process of extracting usable data from a larger set of raw data. It involves analyzing patterns in large batches of data using one or more software tools. Data mining has applications in multiple fields, including science and research. In business, it lets companies learn more about their customers, develop more effective strategies for various business functions, and in turn use resources more efficiently. This helps businesses move closer to their objectives and make better decisions. Data mining involves effective data collection and warehousing as well as computer processing. It uses sophisticated mathematical algorithms to segment the data and evaluate the probability of future events. Data mining is also known as Knowledge Discovery in Data (KDD).[2]

Historical Development of Data Mining[3]

In the 1960s, statisticians and economists used terms like data fishing or data dredging to refer to what they considered the bad practice of analyzing data without an a priori hypothesis. The term "data mining" was used in a similarly critical way by economist Michael Lovell in an article published in the Review of Economics and Statistics in 1983. Lovell indicates that the practice "masquerades under a variety of aliases, ranging from 'experimentation' (positive) to 'fishing' or 'snooping' (negative)."

The term data mining appeared around 1990 in the database community, generally with positive connotations. For a short time in the 1980s, the phrase "database mining" was used, but because it was trademarked by HNC, a San Diego-based company, to pitch its Database Mining Workstation, researchers turned to "data mining" instead. Other terms used include data archaeology, information harvesting, information discovery, and knowledge extraction. Gregory Piatetsky-Shapiro coined the term "knowledge discovery in databases" for the first workshop on the topic (KDD-1989), and this term became more popular in the AI and machine learning communities. However, the term data mining became more popular in the business and press communities. Currently, the terms data mining and knowledge discovery are used interchangeably.

In the academic community, the major forums for research started in 1995, when the First International Conference on Data Mining and Knowledge Discovery (KDD-95) was held in Montreal under AAAI sponsorship. It was co-chaired by Usama Fayyad and Ramasamy Uthurusamy. A year later, in 1996, Usama Fayyad launched the Kluwer journal Data Mining and Knowledge Discovery as its founding editor-in-chief. Later he started the SIGKDD newsletter, SIGKDD Explorations. The KDD international conference became the leading conference in data mining, with an acceptance rate for research paper submissions below 18%. The journal Data Mining and Knowledge Discovery is the primary research journal in the field.

The manual extraction of patterns from data has occurred for centuries. Early methods of identifying patterns in data include Bayes' theorem (1700s) and regression analysis (1800s). The proliferation, ubiquity, and increasing power of computer technology have dramatically increased data collection, storage, and manipulation ability. As data sets have grown in size and complexity, direct "hands-on" data analysis has increasingly been augmented with indirect, automated data processing, aided by other discoveries in computer science, such as neural networks, cluster analysis, genetic algorithms (1950s), decision trees and decision rules (1960s), and support vector machines (1990s). Data mining is the process of applying these methods with the intention of uncovering hidden patterns in large data sets. It bridges the gap from applied statistics and artificial intelligence (which usually provide the mathematical background) to database management by exploiting the way data is stored and indexed in databases to execute the actual learning and discovery algorithms more efficiently, allowing such methods to be applied to ever larger data sets.

The Importance of Data Mining[4]

The volume of data produced is doubling every two years. Unstructured data alone makes up 90 percent of the digital universe. But more information does not necessarily mean more knowledge. Data mining allows you to:

- Sift through all the chaotic and repetitive noise in your data.

- Understand what is relevant and then make good use of that information to assess likely outcomes.

- Accelerate the pace of making informed decisions.

Data Mining Tools and Techniques[5]

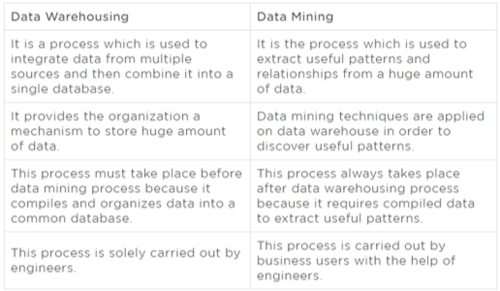

Data mining techniques are used in many research areas, including mathematics, cybernetics, genetics, and marketing. They are a means to drive efficiencies and predict customer behavior; used correctly, they let a business set itself apart from its competition through predictive analysis. Web mining, a type of data mining used in customer relationship management, integrates information gathered over the web using traditional data mining methods and techniques. Web mining aims to understand customer behavior and to evaluate how effective a particular website is. Other areas of data mining technique include network approaches based on multitask learning for pattern classification, parallel and scalable execution of data mining algorithms, the mining of large databases, the handling of relational and complex data types, and machine learning. Machine learning is a data mining tool in which algorithms are designed to learn from data and make predictions.

Data Mining Process[6]

The process of data mining consists of three stages:

(1) the initial exploration,

(2) model building or pattern identification with validation/verification, and

(3) deployment (i.e., the application of the model to new data in order to generate predictions).

- Stage 1: Exploration. This stage usually starts with data preparation, which may involve cleaning data, transforming data, selecting subsets of records, and, for data sets with large numbers of variables ("fields"), performing some preliminary feature selection to bring the number of variables to a manageable range (depending on the statistical methods being considered). Then, depending on the nature of the analytic problem, this first stage may involve anything from a simple choice of straightforward predictors for a regression model to elaborate exploratory analyses using a wide variety of graphical and statistical methods (see Exploratory Data Analysis (EDA)) in order to identify the most relevant variables and determine the complexity and/or general nature of the models that can be considered in the next stage.

- Stage 2: Model building and validation. This stage involves considering various models and choosing the best one based on their predictive performance (i.e., explaining the variability in question and producing stable results across samples). This may sound like a simple operation, but in fact, it sometimes involves a very elaborate process. There are a variety of techniques developed to achieve that goal - many of which are based on the so-called "competitive evaluation of models," that is, applying different models to the same data set and then comparing their performance to choose the best. These techniques - which are often considered the core of predictive data mining - include Bagging (Voting, Averaging), Boosting, Stacking (Stacked Generalizations), and Meta-Learning.

- Stage 3: Deployment. This final stage involves taking the model selected as best in the previous stage and applying it to new data in order to generate predictions or estimates of the expected outcome.

The concept of Data Mining is becoming increasingly popular as a business information management tool, where it is expected to reveal knowledge structures that can guide decisions in conditions of limited certainty. Recently, there has been increased interest in developing new analytic techniques specifically designed to address the issues relevant to business Data Mining (e.g., Classification Trees), but Data Mining is still based on the conceptual principles of statistics, including traditional Exploratory Data Analysis (EDA) and modeling, and it shares with them both some components of its general approaches and specific techniques.

However, an important general difference in focus and purpose between Data Mining and traditional Exploratory Data Analysis (EDA) is that Data Mining is oriented more toward applications than toward the basic nature of the underlying phenomena. In other words, Data Mining is relatively less concerned with identifying the specific relations between the variables involved. For example, uncovering the nature of the underlying functions or the specific types of interactive, multivariate dependencies between variables is not the main goal of Data Mining. Instead, the focus is on producing a solution that can generate useful predictions. Data Mining therefore accepts, among others, a "black box" approach to data exploration or knowledge discovery, and uses not only traditional EDA techniques but also techniques such as Neural Networks, which can generate valid predictions but are not capable of identifying the specific nature of the interrelations between the variables on which the predictions are based.
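The three stages can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data is synthetic, the two candidate models (`fit_mean` and `fit_line`) and all function names are hypothetical, and a single hold-out split stands in for the more elaborate competitive-evaluation techniques named above (bagging, boosting, stacking).

```python
import random
import statistics

def prepare(rows):
    """Stage 1 (exploration/preparation): drop records with missing values."""
    return [(x, y) for x, y in rows if x is not None and y is not None]

def fit_mean(train):
    """Candidate A: always predict the mean of y seen in training."""
    mean_y = statistics.fmean(y for _, y in train)
    return lambda x: mean_y

def fit_line(train):
    """Candidate B: ordinary least-squares line y = a + b * x."""
    xs = [x for x, _ in train]
    ys = [y for _, y in train]
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in train) / sxx
    a = my - b * mx
    return lambda x: a + b * x

def mse(model, rows):
    """Mean squared prediction error of a fitted model on rows."""
    return statistics.fmean((model(x) - y) ** 2 for x, y in rows)

def select_model(rows, candidates, holdout=0.3, seed=0):
    """Stage 2: fit every candidate on a training split and compare
    their errors on held-out records the models have not seen."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - holdout))
    train, test = shuffled[:cut], shuffled[cut:]
    scores = {name: mse(fit(train), test) for name, fit in candidates.items()}
    best = min(scores, key=scores.get)
    # Refit the winning candidate on all available data before deployment.
    return best, candidates[best](rows), scores

# Synthetic data (an assumption): y is roughly 3 + 2x, plus two bad records.
rng = random.Random(42)
raw = [(float(x), 3.0 + 2.0 * x + rng.gauss(0, 1)) for x in range(40)]
raw += [(None, 1.0), (5.0, None)]

clean = prepare(raw)
best_name, model, scores = select_model(
    clean, {"mean": fit_mean, "line": fit_line}
)

# Stage 3 (deployment): apply the selected model to a new, unseen input.
prediction = model(50.0)
```

Comparing candidates on held-out records rather than on the training data is what makes the evaluation a test of predictive performance and not just of fit.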

Data Mining Methods[7]

Data mining is used to simplify and summarize the data in a manner that we can understand, and then allow us to infer things about specific cases based on the patterns we have observed. Of course, specific applications of data mining methods are limited by the data and computing power available and are tailored for specific needs and goals. However, there are several main types of pattern detection that are commonly used. These general forms illustrate what data mining can do.

- Anomaly detection: in a large data set it is possible to get a picture of what the data tends to look like in a typical case. Statistics can be used to determine if something is notably different from this pattern. For instance, the IRS could model typical tax returns and use anomaly detection to identify specific returns that differ from this for review and audit.

- Association learning: This is the type of data mining that drives the Amazon recommendation system. For instance, this might reveal that customers who bought a cocktail shaker and a cocktail recipe book also often buy martini glasses. These types of findings are often used for targeting coupons/deals or advertising. Similarly, this form of data mining (albeit a quite complex version) is behind Netflix movie recommendations.

- Cluster detection: one type of pattern recognition that is particularly useful is recognizing distinct clusters or sub-categories within the data. Without data mining, an analyst would have to look at the data and decide on a set of categories that they believe captures the relevant distinctions between apparent groups in the data. This would risk missing important categories. With data mining, it is possible to let the data itself determine the groups. This is one of the black-box types of algorithms that are hard to understand. But in a simple example - again with purchasing behavior - we can imagine that the purchasing habits of different hobbyists would look quite different from each other: gardeners, fishermen, and model airplane enthusiasts would all be quite distinct. Machine learning algorithms can detect all of the different subgroups within a dataset that differ significantly from each other.

- Classification: If an existing structure is already known, data mining can be used to classify new cases into these pre-determined categories. Learning from a large set of pre-classified examples, algorithms can detect persistent systemic differences between items in each group and apply these rules to new classification problems. Spam filters are a great example of this - large sets of emails that have been identified as spam have enabled filters to notice differences in word usage between legitimate and spam messages, and classify incoming messages according to these rules with a high degree of accuracy.

- Regression: Data mining can be used to construct predictive models based on many variables. Facebook, for example, might be interested in predicting future engagement for a user based on past behavior. Factors like the amount of personal information shared, number of photos tagged, friend requests initiated or accepted, comments, likes, etc. could all be included in such a model. Over time, this model could be honed to include or weigh things differently as Facebook compares how the predictions differ from observed behavior. Ultimately these findings could be used to guide design in order to encourage more of the behaviors that seem to lead to increased engagement over time.

The patterns detected and structures revealed by descriptive data mining are then often applied to predict other aspects of the data. Amazon offers a useful example of how descriptive findings are used for prediction. The (hypothetical) association between cocktail shaker and martini glass purchases, for instance, could be used, along with many other similar associations, as part of a model predicting the likelihood that a particular user will make a particular purchase. This model could match all such associations with a user's purchasing history, and predict which products they are most likely to purchase. Amazon can then serve ads based on what that user is most likely to buy.
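The cocktail-shaker association can be made concrete with the standard support, confidence, and lift measures used in association learning. The sketch below is a minimal illustration: the baskets are invented, the function names are hypothetical, and nothing here is meant to describe how Amazon or Netflix actually compute recommendations.

```python
# Invented purchase baskets (an assumption for illustration only).
baskets = [
    {"cocktail shaker", "recipe book", "martini glasses"},
    {"cocktail shaker", "recipe book", "martini glasses"},
    {"cocktail shaker", "recipe book"},
    {"recipe book", "garden gloves"},
    {"martini glasses"},
]

def support(itemset, baskets):
    """Fraction of baskets that contain every item in itemset."""
    itemset = set(itemset)
    return sum(itemset <= basket for basket in baskets) / len(baskets)

def confidence(antecedent, consequent, baskets):
    """Estimated probability of the consequent given the antecedent."""
    both = support(set(antecedent) | set(consequent), baskets)
    return both / support(antecedent, baskets)

def lift(antecedent, consequent, baskets):
    """How much more often the consequent appears with the antecedent
    than it does overall; values above 1 suggest a positive association."""
    return confidence(antecedent, consequent, baskets) / support(consequent, baskets)

rule_from = {"cocktail shaker", "recipe book"}
rule_to = {"martini glasses"}

rule_support = support(rule_from | rule_to, baskets)
rule_confidence = confidence(rule_from, rule_to, baskets)
rule_lift = lift(rule_from, rule_to, baskets)
```

In these five baskets, two of the three customers who bought both the shaker and the recipe book also bought martini glasses, so the rule's confidence is 2/3. A predictive model of the kind described above could combine many such rule scores with a user's purchase history.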

Data Mining and OLAP[8]

OLAP can be defined as the fast analysis of shared multidimensional data. OLAP and data mining are different but complementary activities. OLAP supports activities such as data summarization, cost allocation, time series analysis, and what-if analysis. However, most OLAP systems do not have inductive inference capabilities beyond support for time-series forecasts. Inductive inference, the process of reaching a general conclusion from specific examples, is a characteristic of data mining. Inductive inference is also known as computational learning. OLAP systems provide a multidimensional view of the data, including full support for hierarchies. This view of the data is a natural way to analyze businesses and organizations. Data mining, on the other hand, usually does not have a concept of dimensions and hierarchies. Data mining and OLAP can be integrated in a number of ways. For example, data mining can be used to select the dimensions for a cube, create new values for a dimension, or create new measures for a cube. OLAP can be used to analyze data mining results at different levels of granularity. Data mining can help you construct more interesting and useful cubes. For example, the results of predictive data mining could be added as custom measures to a cube. Such measures might provide information such as "likely to default" or "likely to buy" for each customer. OLAP processing could then aggregate and summarize the probabilities.
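As a rough illustration of that last point, the sketch below rolls up a per-customer "likely to buy" probability along one or two dimensions, the way an OLAP tool would aggregate a custom measure. The rows, the dimension names (`region`, `segment`), the `p_buy` measure, and the `roll_up` helper are all hypothetical; a real cube would be built in an OLAP engine rather than in plain Python.

```python
from collections import defaultdict

# Hypothetical output of a predictive model: one scored row per customer.
scored = [
    {"region": "North", "segment": "Retail", "p_buy": 0.80},
    {"region": "North", "segment": "Retail", "p_buy": 0.40},
    {"region": "North", "segment": "Business", "p_buy": 0.10},
    {"region": "South", "segment": "Retail", "p_buy": 0.50},
]

def roll_up(rows, dims, measure):
    """Aggregate a measure over the chosen dimensions.

    Returns a dict keyed by the tuple of dimension values, holding the
    customer count, the summed probability (expected number of buyers),
    and the mean probability for that cell.
    """
    totals = defaultdict(lambda: [0, 0.0])
    for row in rows:
        key = tuple(row[d] for d in dims)
        totals[key][0] += 1
        totals[key][1] += row[measure]
    return {
        key: {"customers": n, "expected_buyers": s, "mean_p": s / n}
        for key, (n, s) in totals.items()
    }

# Coarse view: one cell per region.
by_region = roll_up(scored, ["region"], "p_buy")

# Finer granularity: drill down to region and segment.
by_region_segment = roll_up(scored, ["region", "segment"], "p_buy")
```

Summing probabilities gives an expected count of buyers per cell, which is one simple way the mined predictions can be summarized at different levels of granularity.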

Data Mining Vs. Data Warehousing[9]

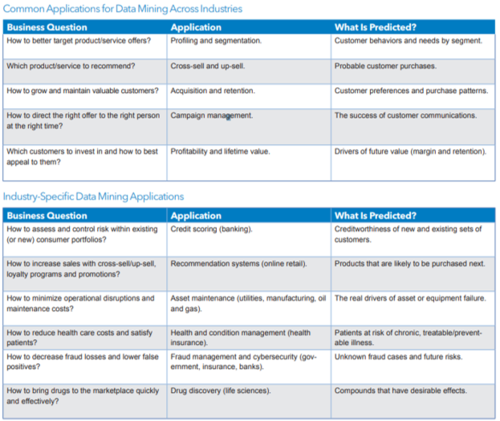

Data Warehousing Vs. Data Mining Comparison Table

source: EduCBA

Challenges in Data Mining[10]

Though data mining is very powerful, it faces many challenges during its implementation. The challenges could be related to performance, data, methods, techniques used, etc. The data mining process becomes successful when the challenges or issues are identified correctly and sorted out properly.

- Noisy and Incomplete Data: Data mining is the process of extracting information from large volumes of data. Real-world data is heterogeneous, incomplete, and noisy, and data collected in large quantities is often inaccurate or unreliable. These problems may stem from errors in the instruments that measure the data or from human error. Suppose a retail chain collects the email addresses of customers who spend more than $200, and the billing staff enter the details into their system. A staff member might misspell an email address while entering it, which results in incorrect data. Some customers might be unwilling to disclose their email address, which results in incomplete data. The data could even be altered through system or human errors. All of this results in noisy and incomplete data, which makes data mining really challenging.

- Distributed Data: Real-world data is usually stored on different platforms in distributed computing environments. It could be in databases, individual systems, or even on the Internet. It is practically very difficult to bring all the data into a centralized data repository, mainly for organizational and technical reasons. For example, different regional offices might have their own servers to store their data, and it may not be feasible to store all the data (millions of terabytes) from all the offices on a central server. So, data mining demands the development of tools and algorithms that enable the mining of distributed data.

- Complex Data: Real-world data is heterogeneous and may include multimedia data (images, audio, and video), temporal data, spatial data, time series, natural language text, and so on. It is difficult to handle these different kinds of data and extract the required information. Often, new tools and methodologies have to be developed to extract relevant information.

- Performance: The performance of the data mining system mainly depends on the efficiency of the algorithms and techniques used. If the algorithms and techniques designed are not up to the mark, then it will affect the performance of the data mining process adversely.

- Incorporation of Background Knowledge: If background knowledge can be incorporated, more reliable and accurate data mining solutions can be found. Descriptive tasks can come up with more useful findings and predictive tasks can make more accurate predictions. But collecting and incorporating background knowledge is a complex process.

- Data Visualization: Data visualization is a very important process in data mining because it is the main way the output is presented to the user. The information extracted should convey exactly what it is intended to convey. But it is often difficult to represent the information to the end user in a way that is both accurate and easy to understand. Because the input data and output information are complex, effective data visualization techniques need to be applied for this to succeed.

- Data Privacy and Security: Data mining normally leads to serious issues in terms of data security, privacy, and governance. For example, when a retailer analyzes the purchase details, it reveals information about buying habits and preferences of customers without their permission.
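The email example from the first challenge above can be turned into a small data-quality audit. This is a sketch under assumptions: the records, the field names (`customer_id`, `email`), and the `audit_emails` helper are hypothetical, and the regular expression is a deliberately loose shape check, not a full address validator.

```python
import re

# Loose shape check (an assumption): something@something.something,
# with no spaces and exactly one "@". Not a complete email validator.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def audit_emails(records):
    """Sort customer ids into valid, missing, and malformed email buckets."""
    report = {"valid": [], "missing": [], "malformed": []}
    for rec in records:
        # Treat None and whitespace-only values as missing.
        email = (rec.get("email") or "").strip()
        if not email:
            report["missing"].append(rec["customer_id"])
        elif EMAIL_RE.match(email):
            report["valid"].append(rec["customer_id"])
        else:
            report["malformed"].append(rec["customer_id"])
    return report

# Hypothetical billing records, including typical entry problems.
records = [
    {"customer_id": 1, "email": "ana@example.com"},
    {"customer_id": 2, "email": "bob@example"},          # domain suffix missing
    {"customer_id": 3, "email": None},                   # customer declined
    {"customer_id": 4, "email": " carol@example.org "},  # stray whitespace
    {"customer_id": 5, "email": "dave example.com"},     # "@" mistyped as space
]

report = audit_emails(records)
```

A check like this only catches entries whose shape is wrong. A misspelling that still looks like a valid address passes unnoticed, which is part of why noisy data remains a hard problem.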

Advantages of Data Mining[11]

- Marketing/Retail: Data mining helps marketing companies build models based on historical data to predict who will respond to new marketing campaigns such as direct mail or online marketing. With these results, marketers can take an appropriate approach to selling profitable products to targeted customers. Data mining benefits retail companies in the same way. Through market basket analysis, a store can arrange its products so that customers can conveniently buy frequently purchased items together. It also helps retail companies offer discounts on particular products that will attract more customers.

- Finance/Banking: Data mining gives financial institutions insight into loan information and credit reporting. By building a model from historical customer data, a bank or other financial institution can distinguish good loans from bad ones. In addition, data mining helps banks detect fraudulent credit card transactions to protect the credit card owner.

- Manufacturing: By applying data mining to operational engineering data, manufacturers can detect faulty equipment and determine optimal control parameters. For example, semiconductor manufacturers face the challenge that even when the conditions of manufacturing environments at different wafer production plants are similar, wafer quality is not the same, and some wafers have defects for unknown reasons. Data mining has been applied to determine the ranges of control parameters that lead to the production of the golden wafer. Those optimal control parameters are then used to manufacture wafers of the desired quality.

- Governments: Data mining helps government agencies by digging through and analyzing records of financial transactions to build patterns that can detect money laundering or other criminal activities.

Trends in Data Mining[12]

Businesses that have been slow to adopt data mining are now catching up with the others. Extracting important information through data mining is widely used to make critical business decisions. In the coming decade, we can expect data mining to become as ubiquitous as some of the more prevalent technologies used today. Some of the key data mining trends for the future include:

- Multimedia Data Mining: This is one of the latest methods which is catching up because of the growing ability to capture useful data accurately. It involves the extraction of data from different kinds of multimedia sources such as audio, text, hypertext, video, images, etc., and the data is converted into a numerical representation in different formats. This method can be used in clustering and classifications, performing similarity checks, and also to identify associations.

- Ubiquitous Data Mining: This method involves mining data from mobile devices to get information about individuals. Despite several challenges, such as complexity, privacy, and cost, this method has considerable potential in various industries, especially in studying human-computer interactions.

- Distributed Data Mining: This type of data mining is gaining popularity as it involves the mining of huge amounts of information stored in different company locations or at different organizations. Highly sophisticated algorithms are used to extract data from different locations and provide proper insights and reports based on them.

- Spatial and Geographic Data Mining: This is a new trending type of data mining that includes extracting information from environmental, astronomical, and geographical data which also includes images taken from outer space. This type of data mining can reveal various aspects such as distance and topology which is mainly used in geographic information systems and other navigation applications.

- Time Series and Sequence Data Mining: The primary application of this type of data mining is the study of cyclical and seasonal trends. This practice is also helpful in analyzing even random events which occur outside the normal series of events. This method is mainly used by retail companies to assess customers' buying patterns and behaviors.
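As a small illustration of the seasonal analysis mentioned in the last item, the sketch below computes a simple seasonal index for each position in a repeating cycle. The quarterly sales figures and the `seasonal_indices` helper are hypothetical, and the method assumes whole cycles of data and ignores any long-term trend, which a fuller analysis would remove first.

```python
from statistics import fmean

def seasonal_indices(series, period):
    """Average value at each position in the cycle, divided by the overall mean.

    An index above 1 marks a position that tends to run above average;
    below 1, a position that tends to run below average.
    Assumes len(series) is a multiple of period and no strong trend.
    """
    overall = fmean(series)
    return [fmean(series[i::period]) / overall for i in range(period)]

# Two years of hypothetical quarterly sales (Q1..Q4, Q1..Q4).
sales = [100, 120, 90, 190, 110, 130, 100, 210]

indices = seasonal_indices(sales, period=4)

# Position (0-based) of the strongest quarter in the cycle.
peak_quarter = indices.index(max(indices))
```

With these figures the fourth quarter stands out clearly, the kind of recurring pattern a retailer might use when planning stock or promotions.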

References

1. Defining Data Mining

2. What does Data Mining Mean?

3. Data Mining - Etymology and Background

4. Why is Data Mining Important?

5. The Tools and Techniques of Data Mining

6. The Process of Data Mining

7. What are the Different Methods of Data Mining?

8. Data Mining and OLAP

9. Data Mining Vs. Data Warehousing

10. Challenges in Data Mining

11. What are the Advantages of Data Mining?

12. 5 Important Future Trends in Data Mining