Dataflow

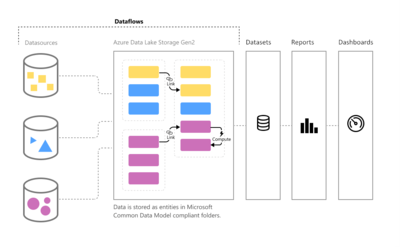

Dataflow is the movement of data through a system composed of software, hardware, or a combination of both. Dataflow is often defined using a model or diagram that maps the entire process of data movement as data passes from one component to the next within a program or system, including how the data changes form along the way. Dataflow design is typically expressed in dataflow diagrams (DFDs), which graphically map how data is transmitted throughout a system. A dataflow diagram is important in the architectural design of a system because it defines what kind of data is needed to start or complete a specific process. Creating a dataflow diagram is part of the dataflow modeling process.[1]
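The idea that a dataflow model states which data each process needs before it can start can be illustrated with a minimal Python sketch. The `Process` class, the `run_dataflow` function, and the order-processing example are hypothetical names chosen for illustration; they do not come from any particular dataflow tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Process:
    """One node of the diagram: consumes named inputs, produces one named output."""
    name: str
    inputs: List[str]
    output: str
    func: Callable[..., object]


def run_dataflow(processes: List[Process], data: Dict[str, object]) -> Dict[str, object]:
    """Run each process once all of the data it needs is available."""
    data = dict(data)  # copy so the caller's dict is not mutated
    pending = list(processes)
    while pending:
        ready = [p for p in pending if all(i in data for i in p.inputs)]
        if not ready:
            missing = {i for p in pending for i in p.inputs if i not in data}
            raise ValueError(f"cannot proceed, missing data: {sorted(missing)}")
        for p in ready:
            # The output of one process becomes available as input to others.
            data[p.output] = p.func(*(data[i] for i in p.inputs))
            pending.remove(p)
    return data


# Hypothetical flow (assumption): raw text -> parsed amounts -> order total.
# The processes are listed out of order on purpose; data availability,
# not list position, decides when each one runs.
flow = [
    Process("total", ["amounts"], "order_total", lambda amounts: sum(amounts)),
    Process("parse", ["raw_order"], "amounts",
            lambda raw: [float(x) for x in raw.split(",")]),
]

result = run_dataflow(flow, {"raw_order": "19.5,5.5,10"})
print(result["order_total"])  # 35.0
```

In this sketch the data changes form at each step (a string, then a list of numbers, then a single total), and a process whose input never becomes available causes an explicit error instead of being skipped silently.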

When Dataflows are Used[2]

Dataflows are designed to support the following scenarios:

Create reusable transformation logic that can be shared by many datasets and reports inside Power BI. Dataflows promote reusability of the underlying data elements, removing the need to create separate connections to your cloud or on-premises data sources.

Expose the data in your own Azure Data Lake Storage Gen2 account, enabling you to connect other Azure services to the raw underlying data.

Create a single source of truth by requiring analysts to connect to the dataflows rather than to the underlying systems. This gives you control over which data is accessed and how it is exposed to report creators. You can also map the data to industry-standard definitions, enabling you to create tidy, curated views that work with other services and products in the Power Platform.

Work with large data volumes and perform ETL at scale. Dataflows with Power BI Premium scale more efficiently and give you more flexibility. Dataflows support a wide range of cloud and on-premises sources.

Prevent analysts from having direct access to the underlying data source. Since report creators can build on top of dataflows, it may be more convenient to grant access to the underlying data sources to only a few individuals, and then give analysts access to the dataflows to build on. This approach reduces the load on the underlying systems and gives administrators finer control over when refreshes place load on those systems.
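The reuse and load-reduction scenarios above can be sketched conceptually in Python. This is an analogy only, not Power BI code: Power BI dataflows are authored with Power Query, and every name below (`read_source`, `refresh_dataflow`, the sample rows, the two reports) is a hypothetical stand-in.

```python
from typing import Dict, List

source_reads = 0  # counts how often the underlying system is queried


def read_source() -> List[Dict[str, str]]:
    """Stand-in for an expensive query against the underlying system."""
    global source_reads
    source_reads += 1
    return [
        {"region": " north ", "sales": "100"},
        {"region": "South", "sales": "250"},
        {"region": "north", "sales": "50"},
    ]


def refresh_dataflow() -> List[Dict[str, object]]:
    """Shared transformation logic: applied once per refresh, reused by every report."""
    return [
        {"region": row["region"].strip().title(), "sales": int(row["sales"])}
        for row in read_source()
    ]


def sales_by_region(rows: List[Dict[str, object]]) -> Dict[str, int]:
    """First report: total sales per cleaned region name."""
    totals: Dict[str, int] = {}
    for row in rows:
        totals[row["region"]] = totals.get(row["region"], 0) + row["sales"]
    return totals


def total_sales(rows: List[Dict[str, object]]) -> int:
    """Second report: grand total, built on the same curated rows."""
    return sum(row["sales"] for row in rows)


curated = refresh_dataflow()          # one refresh queries the source once
report_a = sales_by_region(curated)   # reports read the curated data only
report_b = total_sales(curated)
print(report_a, report_b, source_reads)  # {'North': 150, 'South': 250} 400 1
```

Both reports see the same cleaned region names because the cleaning lives in one place, and the source is queried once per refresh regardless of how many reports are built on the curated data.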

- [1] Definition - What Does Dataflow Mean? Techopedia

- [2] When to use dataflows. Microsoft