Data Architecture

What is Data Architecture?

Data Architecture is a set of rules, policies, standards, and models that govern and define the type of data collected and how it is used, stored, managed, and integrated within an organization and its database systems. It provides a formal approach to creating and managing the flow of data and how it is processed across an organization’s IT systems and applications.[1]

Businesses use data architecture to:

- Strategically prepare organizations to evolve quickly and to take advantage of business opportunities inherent in emerging technologies

- Translate business needs into data and system requirements

- Facilitate alignment of IT and business systems

- Manage complex data and information delivery throughout the enterprise

- Act as an agent for change, transformation, and agility

- Depict the flow of information between people (users) and business processes

Overview of Data Architecture[2]

A data architecture should set data standards for all its data systems as a vision or a model of the eventual interactions between those data systems. Data integration, for example, should be dependent upon data architecture standards since data integration requires data interactions between two or more data systems. A data architecture, in part, describes the data structures used by a business and its computer applications software. Data architectures address data in storage, data in use, and data in motion; descriptions of data stores, data groups, and data items; and mappings of those data artifacts to data qualities, applications, locations, etc.

Essential to realizing the target state, Data Architecture describes how data is processed, stored, and utilized in an information system. It provides criteria for data processing operations so as to make it possible to design data flows and also control the flow of data in the system.

The data architect is typically responsible for defining the target state, aligning during development, and then following up to ensure enhancements are done in the spirit of the original blueprint.

During the definition of the target state, the data architecture breaks a subject down to the atomic level and then builds it back up to the desired form. The data architect does this by working through three traditional architectural processes:

- Conceptual - represents all business entities.

- Logical - represents the logic of how entities are related.

- Physical - the realization of the data mechanisms for a specific type of functionality.

Data architecture should be defined in the planning phase of the design of a new data processing and storage system. The major types and sources of data necessary to support an enterprise should be identified in a manner that is complete, consistent, and understandable. The primary requirement at this stage is to define all of the relevant data entities, not to specify computer hardware items. A data entity is any real or abstracted thing about which an organization or individual wishes to store data.
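The three levels can be illustrated with a minimal Python sketch. The `Customer` and `Order` entities, their attributes, and the SQLite table layout are hypothetical examples chosen for illustration; they are not taken from any particular framework, and a real physical design would depend on the chosen database.

```python
import sqlite3
from dataclasses import dataclass

# Conceptual level: only the business entities are named (hypothetical examples).
CONCEPTUAL_ENTITIES = ["Customer", "Order"]


# Logical level: attributes and relationships, independent of any database product.
@dataclass(frozen=True)
class Customer:
    customer_id: int
    name: str


@dataclass(frozen=True)
class Order:
    order_id: int
    customer_id: int  # relationship: each order belongs to one customer
    total_cents: int


# Physical level: one possible realization, here as SQLite tables.
PHYSICAL_DDL = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    total_cents INTEGER NOT NULL
);
"""


def build_physical_store() -> sqlite3.Connection:
    """Create an in-memory database that realizes the logical model."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(PHYSICAL_DDL)
    return conn


def save(conn: sqlite3.Connection, customer: Customer, orders: list[Order]) -> None:
    """Persist one customer and that customer's orders."""
    conn.execute(
        "INSERT INTO customer VALUES (?, ?)",
        (customer.customer_id, customer.name),
    )
    conn.executemany(
        "INSERT INTO customer_order VALUES (?, ?, ?)",
        [(o.order_id, o.customer_id, o.total_cents) for o in orders],
    )
    conn.commit()
```

Keeping the logical model free of storage details means the physical level could be swapped, for example for a different database, without redefining the entities themselves.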

Components in Data Architecture[3]

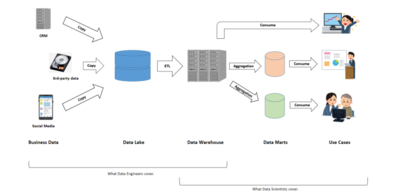

“Data Lake”, “Data Warehouse”, and “Data Mart” are typical components in the architecture of a data platform. Data produced in the business passes through them in this order, and at each stage it is processed and shaped for a further use.

The three components take responsibility for three different functionalities as such:

- Data Lake: holds an original copy of data produced in the business. Processing of the original data should be minimal, if any; otherwise, if some processing later turns out to be wrong, it will not be possible to fix the error retrospectively.

- Data Warehouse: holds data processed and structured by a managed data model, reflecting the global (not specific) direction of the final use of the data. In many cases, the data is in tabular format.

- Data Mart: holds a subset and/or aggregated data set for the use of a particular business function, e.g. a specific business unit or geographical area. A typical example is preparing a summary of KPIs for a specific business line, followed by visualization in a BI tool. Preparing this kind of separate, independent component after the warehouse is especially worthwhile when the user wants the data mart updated regularly and frequently. Conversely, this stage can be skipped when the user only needs a data set for a one-off, ad hoc analysis.
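The division of responsibility among the three components can be sketched in a few lines of Python. The sample records, field names, and function names below are hypothetical; the sketch only illustrates the idea that the lake keeps raw data untouched, the warehouse applies a managed model, and the mart aggregates for one business use.

```python
import json
from collections import defaultdict
from decimal import Decimal

# Data lake: raw events kept exactly as produced (hypothetical sample data).
DATA_LAKE = [
    '{"order_id": 1, "region": "EU", "amount": "19.90"}',
    '{"order_id": 2, "region": "eu", "amount": "5.10"}',
    '{"order_id": 3, "region": "US", "amount": "12.00"}',
]


def build_warehouse(raw_lines):
    """Parse raw lines into typed, consistently formatted tabular rows."""
    rows = []
    for line in raw_lines:
        record = json.loads(line)
        rows.append(
            {
                "order_id": int(record["order_id"]),
                "region": record["region"].upper(),  # normalize region codes
                "amount_cents": int(Decimal(record["amount"]) * 100),
            }
        )
    return rows


def build_sales_mart(warehouse_rows):
    """Aggregate warehouse rows into revenue per region for one business use."""
    totals = defaultdict(int)
    for row in warehouse_rows:
        totals[row["region"]] += row["amount_cents"]
    return dict(totals)


warehouse = build_warehouse(DATA_LAKE)
sales_mart = build_sales_mart(warehouse)
```

Because `DATA_LAKE` is never modified, a mistake in `build_warehouse` (say, a wrong normalization rule) can be corrected and the warehouse and mart rebuilt from the original records.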

Roughly speaking, data engineers cover the path from extracting data produced in the business into the data lake, through building the data model in the data warehouse, including establishing the ETL pipeline; data scientists cover the path from extracting data out of the data warehouse, through building the data mart, to further business application and value creation.

Of course, this division of roles between data engineers and data scientists is somewhat idealized, and many companies do not hire both just to fit this definition. In practice, their job descriptions tend to overlap.

Data Architecture Principles

According to Joshua Klahr, vice president of product management, core products, at Splunk, and former vice president of product management at AtScale, six principles form the foundation of modern data architecture:

- Data is a shared asset. A modern data architecture needs to eliminate departmental data silos and give all stakeholders a complete view of the company.

- Users require adequate access to data. Beyond breaking down silos, modern data architectures need to provide interfaces that make it easy for users to consume data using tools fit for their jobs.

- Security is essential. Modern data architectures must be designed for security and they must support data policies and access controls directly on the raw data.

- Common vocabularies ensure common understanding. Shared data assets, such as product catalogs, fiscal calendar dimensions, and KPI definitions, require a common vocabulary to help avoid disputes during analysis.

- Data should be curated. Invest in core functions that perform data curation (modeling important relationships, cleansing raw data, and curating key dimensions and measures).

- Data flows should be optimized for agility. Reduce the number of times data must be moved to reduce cost, increase data freshness, and optimize enterprise agility.[4]

Data Architecture Frameworks

There are multiple enterprise architecture frameworks that are used as the foundation for building the data architecture framework of an organization.

- DAMA-DMBOK 2: This refers to DAMA International's Data Management Body of Knowledge – a framework designed specifically for data management. It includes standard definitions of data management terminology, functions, deliverables, and roles, and also presents guidelines on data management principles.

- Zachman Framework for Enterprise Architecture: John Zachman created this enterprise ontology at IBM during the 1980s. The 'data' column of this framework includes multiple layers like key architectural standards for the business, a semantic model or conceptual/enterprise data model, an enterprise or logical data model, a physical data model, and actual databases.

- The Open Group Architecture Framework (TOGAF): TOGAF is one of the most widely used enterprise architecture methodologies. It offers a framework for designing, planning, implementing, and managing data architecture best practices, and helps define business goals and align them with architectural objectives.[5]

Characteristics of Effective Data Architecture

Data architecture is “modern” if it's built around certain characteristics:

- User-driven: In the past, data was static and access was limited to a select group of IT experts and data scientists. Decision makers didn't necessarily get what they wanted or needed — only what was available. In modern data architecture, business users can confidently define the requirements, because data architects can pool data into a data lake or data warehouse and create solutions to access it in ways that meet business objectives.

- Built on shared data: Effective enterprise data architecture is built on data structures that encourage collaboration. Good data architecture eliminates silos by combining data from all parts of the organization, along with external sources as needed, into one place to eliminate competing versions of the same data. In this environment, data is not bartered among business units or hoarded, but is seen as a shared, companywide asset.

- Automated: Automation removes the friction that made legacy data systems tedious to configure. Data processing functions that took months to build can now be completed in hours or days using cloud-based tools. If a user wants access to different data, automation enables the architect to quickly design data pipelines to deliver it. As new data is sourced, data architects can quickly integrate it into the architecture.

- Driven by AI: Smart data architecture takes automation to a new level, using machine learning (ML) and artificial intelligence (AI) to adjust, alert, and recommend solutions to new conditions. ML and AI can identify data types, identify and fix data quality errors, create structures for incoming data, identify relationships for fresh insights, and recommend related datasets and analytics.

- Elastic: Elasticity allows companies to scale up or down as needed. Here, the cloud is your best friend, as it allows on-demand scalability quickly and affordably. Elasticity allows administrators and data analysts to focus on troubleshooting and problem-solving rather than on exacting capacity calibration or overbuying hardware to keep up with demand.

- Simple: Simplicity trumps complexity when considering efficient data architecture. Do you need a show dog or a workhorse? Strive for simplicity in data movement, data platforms, data assembly frameworks, and analytic platforms.

- Secure: Security is built into modern data architecture, restricting data access and ensuring that sensitive data is available on a need-to-know basis as defined by the business. Good data architecture also recognizes existing and emerging threats to data security while ensuring regulatory compliance with legislation such as HIPAA and GDPR.[6]
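The need-to-know idea in the last point can be sketched as a column-level read policy. The roles, column names, and the `read_rows` helper below are hypothetical and only illustrate the principle; production systems would normally rely on the access-control features of the data platform itself.

```python
# Hypothetical policy: the columns each role is allowed to read.
COLUMN_POLICY = {
    "analyst": {"patient_id", "diagnosis_code"},
    "billing": {"patient_id", "invoice_total"},
}


def read_rows(rows, role):
    """Return rows with only the columns the given role may see.

    Unknown roles get no columns at all (deny by default).
    """
    allowed = COLUMN_POLICY.get(role, set())
    return [{key: value for key, value in row.items() if key in allowed} for row in rows]


records = [{"patient_id": 7, "diagnosis_code": "J45", "invoice_total": 120}]
analyst_view = read_rows(records, "analyst")
billing_view = read_rows(records, "billing")
```

Denying by default means a role that is missing from the policy sees nothing, rather than everything.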

The Risks of Weak Data Architecture

Rapid technological advances and changes in business needs can undermine effective data architectures. As new lines and shapes are added to a data architecture’s data flow, it becomes very difficult to keep the whole picture updated and consistent. This can lead to duplicate data processes, high costs, and increased maintenance time for businesses. Organizations should strive to balance the complexity of data architectures, business needs, and the new tools/platforms for data management by carefully adopting new technologies that align with their vision and goals.[7]

See Also

Data architecture is a critical component of enterprise architecture that outlines how data is collected, stored, transformed, distributed, and consumed within an organization. It includes the principles, guidelines, and standards that govern data flows and management, aiming to align data assets with business strategy to improve efficiency, compliance, and decision-making.

- Data Governance: Discussing the overall management of the availability, usability, integrity, and security of the data employed in an organization. Data governance includes the establishment of policies, procedures, and standards that guide data architecture decisions.

- Database Management System (DBMS): Exploring the types of software systems that are used to store, retrieve, and manage data in databases. This includes relational databases, NoSQL databases, and in-memory databases.

- Data Modeling: Covering the process of creating data models for an information system by applying formal data modeling techniques. Data modeling is a key component of data architecture, helping to define how data is stored, consumed, integrated, and managed.

- Enterprise Architecture (EA): Discussing the conceptual blueprint that defines the structure and operation of an organization. Data architecture is a component of EA, which also includes application architecture, technology architecture, and business architecture.

- Big Data Technologies: Exploring technologies and frameworks designed to handle, process, and analyze large volumes of data that traditional data processing software cannot handle. This includes Hadoop, Spark, and NoSQL databases.

- Cloud Data Services: Discussing the services provided by cloud platforms for data storage, processing, and analytics, such as Amazon Web Services (AWS) S3, Google Cloud Storage, and Azure Data Lake. Cloud data services play an increasingly important role in modern data architectures.

- Data Integration: Covering the practices and technologies involved in preparing and combining data from different sources to provide a unified, consistent view. This includes ETL (extract, transform, load) processes, data replication, and data federation.

- Data Warehouse and Data Lake: Discussing the centralized repositories for storing structured and unstructured data at scale. Data warehouses and data lakes are key components of data architecture for analytics and business intelligence.

- Business Intelligence (BI) and Data Analytics: Exploring the strategies and technologies used by enterprises for data analysis and business information. Data architecture must support BI and analytics applications by providing reliable, timely, and accurate data.

- Data Privacy and Data Security: Highlighting the measures and controls that ensure data privacy and protect data from unauthorized access and data breaches. Data architecture must incorporate security and compliance considerations, especially for sensitive and personal data.

- Master Data Management (MDM): Discussing the process of creating and maintaining a consistent and accurate set of master data across an organization. MDM is a critical component of data architecture for ensuring data integrity and consistency.

- Data Quality Management: Covering the processes and technologies used to measure, manage, and improve the quality of data. High-quality data is crucial for effective decision-making and operational efficiency.