Feedforward Neural Network

What is a Feedforward Neural Network?

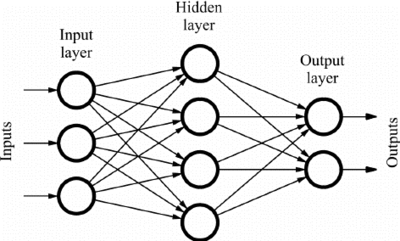

A Feedforward Neural Network is an artificial neural network in which node connections do not form a cycle. It is one of the simplest forms of artificial neural networks. In a feedforward neural network, the information moves in only one direction—forward—from the input nodes, through the hidden nodes (if any), and to the output nodes. The network has no cycles or loops, hence the name "feedforward."

The counterpart of a feedforward neural network is a recurrent neural network, in which some connections form cycles, so a node's output can feed back into nodes that were computed earlier. In a feedforward network, data may pass through multiple hidden layers, but it always moves forward and never backward.

source: Ramon Quiza

Key Components of Feedforward Neural Network

- Input Layer: This layer consists of input neurons that send data to the next layer (the hidden layer or directly to the output layer if no hidden layer exists). Each neuron in the input layer represents a feature of the input data.

- Hidden Layers: These layers are between the input and output layers. Their function is to process the inputs received from the input layer and perform computations using weighted inputs, biases, and activation functions to send an output to the next layer.

- Output Layer: The final layer that produces the output for the network. The design of the output layer depends on the specific requirements of the model, such as classification or regression tasks.

- Weights and Biases: Neurons in one layer connect to neurons in the next layer through links associated with weights. Each neuron also has a bias term. These weights and biases are adjusted during the training process to minimize prediction errors.

- Activation Function: This is a function applied to the output of each neuron, which introduces nonlinear properties to the model. Common activation functions include Sigmoid, Tanh, and ReLU (Rectified Linear Unit).
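
As a rough illustration of how weights, a bias, and an activation function combine inside a single neuron, here is a minimal Python sketch. The function names (`neuron_output`, `sigmoid`, `relu`, `tanh`) and the sample numbers are assumptions made for this example; they do not come from any particular library.

```python
import math

def sigmoid(z):
    """Squash z into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    """Rectified Linear Unit: pass positive values, clip negatives to 0."""
    return max(0.0, z)

# Tanh squashes z into the range (-1, 1); the standard library provides it.
tanh = math.tanh

def neuron_output(inputs, weights, bias, activation):
    """Weighted sum of the inputs plus the bias, passed through an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

# Hypothetical neuron with two inputs (values chosen only for illustration).
inputs = [1.0, 2.0]
weights = [0.5, -0.25]
bias = 1.0

for name, fn in [("sigmoid", sigmoid), ("tanh", tanh), ("relu", relu)]:
    print(name, neuron_output(inputs, weights, bias, fn))
```

The same weighted sum gives a different output under each activation function, which is why the choice of activation is treated as a separate design decision for each layer.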

How Feedforward Neural Networks Work

- Data Feeding: Data is fed into the input layer. Each neuron in the next layer multiplies every input it receives by the weight of the corresponding connection and sums the results.

- Applying Activation Function: The neuron's bias is added to the weighted sum of its inputs, and the result is passed through an activation function, which determines the neuron's output.

- Forward Propagation: The output from one layer is passed as input to the next layer, and this continues until the output layer is reached.

- Backpropagation: During training, a loss function measures the error between the predicted output and the actual output. This error is propagated backward through the network to determine how much each weight and bias contributed to it, and the weights and biases are then adjusted to reduce it, typically by gradient descent. The process is repeated for every entry in the training dataset, often over thousands of iterations.
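
The four steps above can be sketched in plain Python for a network with one hidden layer and a single output. This is a minimal sketch under stated assumptions, not a reference implementation: the sigmoid activation, the squared-error loss, the learning rate, the number of epochs, and the four-example toy dataset (logical OR) are all choices made for illustration, and the names `init_params`, `forward`, `train_step`, and `mean_loss` are hypothetical.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def init_params(n_in, n_hidden, rng):
    """Small random weights and zero biases for a one-hidden-layer network."""
    return {
        "W1": [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)],
        "b1": [0.0] * n_hidden,
        "W2": [rng.uniform(-1, 1) for _ in range(n_hidden)],
        "b2": 0.0,
    }

def forward(x, p):
    """Forward propagation: input -> hidden layer -> single output."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(p["W1"], p["b1"])]
    output = sigmoid(sum(w * h for w, h in zip(p["W2"], hidden)) + p["b2"])
    return hidden, output

def train_step(x, target, p, lr):
    """One backpropagation update for loss = 0.5 * (output - target) ** 2."""
    hidden, output = forward(x, p)
    # Error signal at the output neuron (derivative of the loss w.r.t. its weighted sum).
    delta_out = (output - target) * output * (1.0 - output)
    # Error signals at the hidden neurons, computed with W2 before it is updated.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(p["W2"], hidden)]
    # Gradient descent: move each weight and bias against its gradient.
    for j, h in enumerate(hidden):
        p["W2"][j] -= lr * delta_out * h
        p["b1"][j] -= lr * delta_hidden[j]
        for i, xi in enumerate(x):
            p["W1"][j][i] -= lr * delta_hidden[j] * xi
    p["b2"] -= lr * delta_out

def mean_loss(data, p):
    return sum(0.5 * (forward(x, p)[1] - t) ** 2 for x, t in data) / len(data)

# Toy dataset (assumption for illustration): logical OR of two inputs.
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]

rng = random.Random(0)
params = init_params(n_in=2, n_hidden=3, rng=rng)
loss_before = mean_loss(data, params)
for epoch in range(2000):          # repeat over the whole dataset many times
    for x, target in data:
        train_step(x, target, params, lr=0.5)
loss_after = mean_loss(data, params)
print(f"mean loss before: {loss_before:.4f}, after: {loss_after:.4f}")
```

Updating after every example, as done here, is only one option; averaging the gradients over a batch of examples before each update is an equally common design.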

Applications of Feedforward Neural Networks

- Classification Tasks: Such as digit recognition, image classification, and text categorization.

- Regression Tasks: Such as predicting prices of houses, stock prices, and temperature forecasting.

- Speech Recognition: Converting spoken language into text.

- Customer Research: Analyzing customer data to identify patterns or predict trends.

Advantages of Feedforward Neural Networks

- Simplicity: They are straightforward to implement and understand, making them a popular choice for many introductory applications in neural networks.

- Efficiency: Feedforward neural networks are generally fast and efficient to train, especially when the dataset is small and the patterns to learn are simple.

Challenges and Limitations

- Limited Complexity: Unlike Recurrent Neural Networks (RNNs), they cannot capture sequential information in input data, and they do not learn features in grid-like data structures as effectively as Convolutional Neural Networks (CNNs).

- Overfitting: Feedforward neural networks might overfit without proper regularization techniques, especially if the network architecture is too complex for the task.
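
One widely used regularization technique is an L2 penalty on the weights, often called weight decay. The sketch below shows how such a penalty changes a gradient descent update; the function names and the values of `lr` (learning rate) and `lam` (penalty strength) are assumptions chosen for illustration.

```python
def l2_penalty(weights, lam):
    """Extra loss term: 0.5 * lam * sum of squared weights."""
    return 0.5 * lam * sum(w * w for w in weights)

def update_with_weight_decay(weights, grads, lr, lam):
    """One gradient descent step on (data loss + L2 penalty).

    The penalty contributes lam * w to each gradient, so every step
    also pulls each weight slightly toward zero.
    """
    return [w - lr * (g + lam * w) for w, g in zip(weights, grads)]

# Hypothetical weights with zero data gradient: only the penalty acts on them.
weights = [1.0, -2.0]
print(l2_penalty(weights, lam=0.1))
print(update_with_weight_decay(weights, grads=[0.0, 0.0], lr=0.1, lam=0.1))
```

Because large weights are penalized, the network is nudged toward simpler functions, which tends to reduce overfitting when the architecture is larger than the task requires.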

Conclusion

Feedforward Neural Networks are a foundational type of artificial neural network that helps pave the way for understanding more complex networks. They are widely used in various applications ranging from simple to moderately complex tasks, providing a good starting point for exploring the capabilities and limitations of neural networks in artificial intelligence and machine learning domains.

See Also

- Artificial Neural Network (ANN): Discussing the broader category of networks, including how they are loosely modeled on the way the human brain processes information.

- Deep Learning: Exploring the subset of machine learning based on learning data representations, of which feedforward networks are a foundational architecture.

- Backpropagation: Covering the primary training algorithm for feedforward neural networks, which is essential for understanding how these networks learn.

- Activation Functions: Discussing functions like sigmoid, ReLU, and softmax that help a neural network model complex patterns.

- Supervised Learning: Exploring the type of machine learning task that is often performed using feedforward neural networks, where the system learns from labeled training data.

- Convolutional Neural Network (CNN): Covering a specialized kind of neural network for processing data that has a grid-like topology, such as images.

- Recurrent Neural Network (RNN): Discussing neural networks in which connections between nodes form cycles, so information from earlier steps of a sequence can influence later ones, which contrasts with the feedforward architecture.

- Loss Functions: Exploring how networks measure the error between predicted outputs and actual outputs during training.

- Gradient Descent: Discussing the optimization algorithm used to minimize the loss function in neural networks, including feedforward networks.

- Machine Learning Algorithms: Covering a broad overview of algorithms used in machine learning, situating feedforward networks within this context.

Together, these topics provide a comprehensive view of feedforward neural networks, including their structure, how they function, and their relationship to broader concepts in artificial intelligence and machine learning.