Computer Vision

Definition - What is Computer Vision?[1]

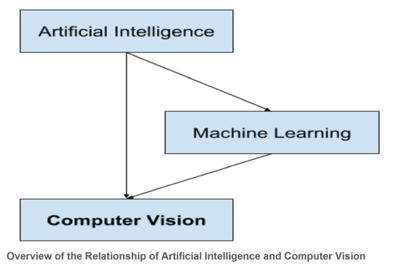

Computer vision (CV) enables computers to see, identify and process images in a way similar to human vision, and then provide appropriate output. It is like imparting human intelligence and instincts to a computer. The computer must interpret what it sees, and then perform appropriate analysis or act accordingly. Computer vision is the subcategory of artificial intelligence (AI) that focuses on building and using digital systems to process, analyze and interpret visual data. The goal of computer vision is to enable computing devices to correctly identify an object or person in a digital image and take appropriate action. Computer vision commonly uses convolutional neural networks (CNNs) to process visual data at the pixel level and recurrent neural networks (RNNs), another deep learning architecture, to understand how one pixel relates to another.

source: Machine Learning Mastery
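
To make the pixel-level processing concrete, the following minimal sketch applies one small filter to a tiny grayscale image, which is the core operation inside a CNN layer. It is an illustration only: the function name `convolve2d`, the 4x4 image and the hand-picked kernel are assumptions made for this example, whereas a real CNN learns its kernel values from training data.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products.

    This is the cross-correlation that CNN layers conventionally call
    "convolution". Both arguments are lists of rows of numbers.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output


# Hypothetical 4x4 grayscale image: dark left half (0), bright right half (9).
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-picked kernel that responds where brightness rises from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)
```

The output is non-zero only in the middle column, where the dark and bright halves meet. A stack of such filters, with learned rather than hand-picked values, is how a CNN turns raw pixels into features such as edges.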

History of Computer Vision[2]

In the late 1960s, computer vision began at universities which were pioneering artificial intelligence. It was meant to mimic the human visual system, as a stepping stone to endowing robots with intelligent behavior. In 1966, it was believed that this could be achieved through a summer project, by attaching a camera to a computer and having it "describe what it saw".

What distinguished computer vision from the prevalent field of digital image processing at that time was a desire to extract three-dimensional structure from images with the goal of achieving full scene understanding. Studies in the 1970s formed the early foundations for many of the computer vision algorithms that exist today, including extraction of edges from images, labeling of lines, non-polyhedral and polyhedral modeling, representation of objects as interconnections of smaller structures, optical flow, and motion estimation.

The next decade saw studies based on more rigorous mathematical analysis and quantitative aspects of computer vision. These include the concept of scale-space, the inference of shape from various cues such as shading, texture and focus, and contour models known as snakes. Researchers also realized that many of these mathematical concepts could be treated within the same optimization framework as regularization and Markov random fields.

By the 1990s, some of the previous research topics became more active than the others. Research in projective 3-D reconstructions led to better understanding of camera calibration. With the advent of optimization methods for camera calibration, it was realized that a lot of the ideas were already explored in bundle adjustment theory from the field of photogrammetry. This led to methods for sparse 3-D reconstructions of scenes from multiple images. Progress was made on the dense stereo correspondence problem and further multi-view stereo techniques. At the same time, variations of graph cut were used to solve image segmentation. This decade also marked the first time statistical learning techniques were used in practice to recognize faces in images (see Eigenface). Toward the end of the 1990s, a significant change came about with the increased interaction between the fields of computer graphics and computer vision. This included image-based rendering, image morphing, view interpolation, panoramic image stitching and early light-field rendering.

Recent work has seen the resurgence of feature-based methods, used in conjunction with machine learning techniques and complex optimization frameworks. The advancement of deep learning techniques has brought further life to the field of computer vision. On several benchmark computer vision data sets, for tasks ranging from classification to segmentation and optical flow, the accuracy of deep learning algorithms has surpassed that of prior methods.

How Computer Vision Works[3]

Computer vision works in three basic steps:

- Acquiring an image: Images, even large sets, can be acquired in real-time through video, photos or 3D technology for analysis.

- Processing the image: Deep learning models automate much of this process, but the models are often trained by first being fed thousands of labeled or pre-identified images.

- Understanding the image: The final step is the interpretative step, where an object is identified or classified.
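
The three steps above can be sketched as a toy pipeline. This is a minimal illustration under stated assumptions, not how a production system works: the function names are hypothetical, the "camera" returns a hard-coded 3x3 grayscale image, and a fixed brightness threshold stands in for the trained deep learning model that a real system would use in the processing and understanding steps.

```python
def acquire_image():
    """Step 1 (acquire): stand-in for reading a camera frame or image file.

    Returns a hypothetical 3x3 grayscale image with pixel values 0..255.
    """
    return [
        [200, 220, 210],
        [190, 230, 205],
        [215, 225, 198],
    ]


def process_image(image):
    """Step 2 (process): scale pixels to 0..1 and reduce them to one feature.

    The single feature here is mean brightness; a deep learning model would
    instead compute many learned features.
    """
    pixels = [p / 255 for row in image for p in row]
    return sum(pixels) / len(pixels)


def understand_image(mean_brightness, threshold=0.5):
    """Step 3 (understand): assign a class label.

    Assumption: a simple threshold rule replaces a trained classifier.
    """
    return "bright" if mean_brightness >= threshold else "dark"


label = understand_image(process_image(acquire_image()))
print(label)
```

Keeping the three stages as separate functions mirrors the description above and makes it clear where a real camera driver or a trained model would be swapped in.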

Computer Vision Examples[4]

Many organizations don’t have the resources to fund computer vision labs and create deep learning models and neural networks. They may also lack the computing power required to process huge sets of visual data. Companies such as IBM are helping by offering computer vision software development services. These services deliver pre-built learning models available from the cloud — and also ease demand on computing resources. Users connect to the services through an application programming interface (API) and use them to develop computer vision applications.

While it’s getting easier to obtain resources to develop computer vision applications, an important question to answer early on is: What exactly will these applications do? Understanding and defining specific computer vision tasks can focus and validate projects and applications and make it easier to get started. Here are a few examples of established computer vision tasks:

- Image classification sees an image and can classify it (a dog, an apple, a person’s face). More precisely, it is able to accurately predict that a given image belongs to a certain class. For example, a social media company might want to use it to automatically identify and segregate objectionable images uploaded by users.

- Object detection can use image classification to identify a certain class of object and then detect and tabulate its appearances in an image or video. Examples include detecting damage on an assembly line or identifying machinery that requires maintenance.

- Object tracking follows or tracks an object once it is detected. This task is often executed with images captured in sequence or real-time video feeds. Autonomous vehicles, for example, need not only to classify and detect objects such as pedestrians, other cars and road infrastructure, but also to track them in motion to avoid collisions and obey traffic laws.

- Content-based image retrieval uses computer vision to browse, search and retrieve images from large data stores, based on the content of the images rather than metadata tags associated with them. This task can incorporate automatic image annotation that replaces manual image tagging. These tasks can be used for digital asset management systems and can increase the accuracy of search and retrieval.
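
As an illustration of the last task in the list above, content-based image retrieval can be sketched by comparing images through a content descriptor rather than through tags. The sketch below is a simplification under stated assumptions: it uses a coarse intensity histogram as the descriptor and histogram intersection as the similarity measure, where a modern system would more likely use learned features. The function names and the tiny 2x2 "images" are hypothetical.

```python
def histogram(image, bins=4, max_value=256):
    """Normalized intensity histogram of a grayscale image (list of rows)."""
    counts = [0] * bins
    pixels = [p for row in image for p in row]
    for p in pixels:
        counts[p * bins // max_value] += 1
    return [c / len(pixels) for c in counts]


def similarity(h1, h2):
    """Histogram intersection: 1.0 for identical histograms, 0.0 for disjoint."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def retrieve(query, store, top_k=1):
    """Return the names of the top_k stored images most similar to the query."""
    q = histogram(query)
    ranked = sorted(
        store,
        key=lambda name: similarity(q, histogram(store[name])),
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical image store: name -> 2x2 grayscale image (values 0..255).
store = {
    "night_sky": [[5, 10], [20, 15]],
    "snow_field": [[250, 240], [245, 255]],
    "checkerboard": [[0, 255], [255, 0]],
}

# The query is a mostly bright image; no tags or file names are consulted.
query = [[230, 251], [248, 239]]
best = retrieve(query, store)
print(best)
```

The query matches the bright "snow_field" entry purely on pixel content, which is the property that lets such systems replace or supplement manual tagging.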

Applications Of Computer Vision[5]

Computer vision is one of the areas in Machine Learning where core concepts are already being integrated into major products that we use every day.

- Computer Vision In Self-Driving Cars: It is not just tech companies that are leveraging machine learning for image applications. Computer vision enables self-driving cars to make sense of their surroundings. Cameras capture video from different angles around the car and feed it to computer vision software, which then processes the images in real-time to find the extremities of roads, read traffic signs, and detect other cars, objects and pedestrians. The self-driving car can then steer its way on streets and highways, avoid hitting obstacles, and (hopefully) safely drive its passengers to their destination.

- Computer Vision In Facial Recognition: Computer vision also plays an important role in facial recognition applications, the technology that enables computers to match images of people’s faces to their identities. Computer vision algorithms detect facial features in images and compare them with databases of face profiles. Consumer devices use facial recognition to authenticate the identities of their owners. Social media apps use facial recognition to detect and tag users. Law enforcement agencies also rely on facial recognition technology to identify criminals in video feeds.

- Computer Vision In Augmented Reality & Mixed Reality: Computer vision also plays an important role in augmented and mixed reality, the technology that enables computing devices such as smartphones, tablets and smart glasses to overlay and embed virtual objects on real-world imagery. Using computer vision, AR gear detects objects in the real world in order to determine where on a device’s display to place a virtual object. For instance, computer vision algorithms can help AR applications detect planes such as tabletops, walls and floors, a very important part of establishing depth and dimensions and placing virtual objects in the physical world.

- Computer Vision In Healthcare: Computer vision has also been an important part of advances in health-tech. Computer vision algorithms can help automate tasks such as detecting cancerous moles in skin images or finding symptoms in x-ray and MRI scans.

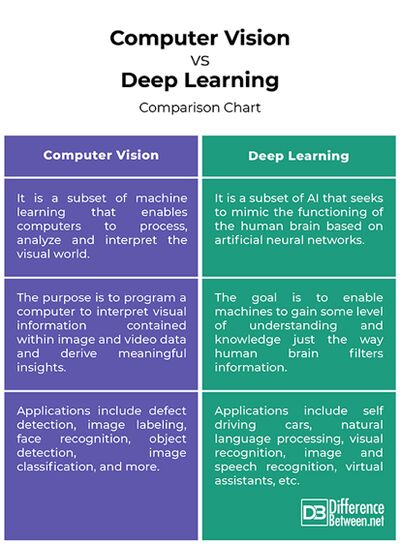
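
The comparison step described under facial recognition above, matching detected facial features against a database of face profiles, can be sketched as a nearest-match search. This is a hedged illustration only: it assumes that some upstream model has already converted each face into a numeric feature vector, and the three-number vectors, the names, and the 0.9 threshold are all hypothetical values chosen for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(face_vector, profiles, threshold=0.9):
    """Return the best-matching profile name, or None if nothing is close enough."""
    best_name, best_score = None, threshold
    for name, profile in profiles.items():
        score = cosine_similarity(face_vector, profile)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical database of face profiles (name -> feature vector).
profiles = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.1, 0.8, 0.5],
}

match = identify([0.88, 0.12, 0.31], profiles)  # close to alice's profile
unknown = identify([0.0, 0.0, 1.0], profiles)   # not close to any profile
print(match, unknown)
```

Returning `None` below the threshold matters in practice: without it, every face would be assigned to whichever stored profile happened to be least dissimilar.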

Computer Vision vs. Deep Learning[6]

- Concept: Computer vision is a field of AI that deals with making computers or machines understand images and video similarly to humans. The idea is to get machines to understand and interpret the visual world so that they make sense out of it and derive some meaningful insights. Deep learning is a subset of machine learning that seeks to mimic the functioning of the human brain based on artificial neural networks.

- Purpose: The purpose of computer vision is to program a computer to interpret visual information contained within image and video data in order to make better sense of the digital data. The idea is to translate this data into meaningful insights, using contextual information provided by humans in order to make better business decisions and solve complex problems. Deep learning has been introduced with the objective of moving machine learning closer to AI. DL algorithms have revolutionized the way we deal with data. The goal is to extract features from raw data based on the notion of artificial neural networks.

- Application: The most common real world applications of computer vision include defect detection, image labeling, face recognition, object detection, image classification, object tracking, movement analysis, cell classification, and more. Top applications of deep learning are self driving cars, natural language processing, visual recognition, image and speech recognition, virtual assistants, chatbots, fraud detection, etc.

Challenges in Implementing Computer Vision[7]

By 2027, the global computer vision market size is projected to reach $19.1 billion. Much of the growth will be fueled by the wider adoption of artificial intelligence solutions for quality control in manufacturing, facial recognition and biometric scanning systems for the security industries, and the somewhat delayed, yet still imminent arrival of autonomous vehicles. The rapid progress in deep learning algorithms (and convolutional neural networks (CNNs) in particular) accelerated the scale and speed of implementing computer vision solutions. However, despite an overall positive outlook, a set of factors still constrains computer vision implementations. Below are the top five.

- Suboptimal Hardware Implementation: Computer vision applications are a double-pronged setup, featuring both software algorithms and hardware systems (cameras and often IoT sensors). Failure to properly configure the latter leaves you with significant blind spots. Hence, you need to first ensure that you have a camera capable of capturing high-definition video streams at the required frame rate. Then you need to ensure a proper angle for capturing the object’s position. When you test the setup, make sure that the cameras:

  - Cover all areas of surveillance.

  - Are properly positioned, so that the frame captures the object from the correct angle.

  - Have all the necessary configurations in check.

- Underestimating the Volume and Costs of Required Computing Resources: The boom in popularity of AI technologies (ML, DL, NLP, and computer vision among others) has largely commoditized access to best-in-class computer vision libraries and deep learning frameworks, distributed as open-source solutions. For skilled data scientists, coming up with an algorithmic solution to computer vision problems is no longer an issue (even if we are talking about advanced computer vision scenarios such as autonomous driving). The modeling know-how is already available. It is computing power that often constrains model scaling and large-scale deployments. When going forward with computer vision technology, many business leaders underestimate:

  - Project hardware needs

  - Cloud computing costs

As a result, some invest in advanced algorithm development but subsequently fail to properly train and test the models because the available hardware doesn’t meet the project’s technical requirements. To better understand the costs of launching complex machine learning projects, consider Noisy Student, a semi-supervised learning approach developed by Google that was state of the art for image recognition when it was introduced. It relies on a convolutional neural network (CNN) architecture with over 480M parameters for processing images. Understandably, such operations require immense computing power.
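
A rough back-of-the-envelope calculation shows why a parameter count of this size translates into hardware requirements. The sketch below is an estimate under stated assumptions, not a measurement of Noisy Student itself: it assumes 32-bit (4-byte) weights, and for training it assumes an Adam-style optimizer that keeps weights, gradients and two moment estimates per parameter. Memory for activations and input batches, which is often larger still, is deliberately left out. The function name is hypothetical.

```python
def weight_memory_gib(num_parameters, bytes_per_parameter=4):
    """Memory needed to hold the parameters alone, in GiB (2**30 bytes)."""
    return num_parameters * bytes_per_parameter / 2**30


params = 480_000_000  # parameter count cited in the text

# Inference: one copy of the float32 weights.
inference_gib = weight_memory_gib(params)

# Training (assumption): weights + gradients + two optimizer moment
# estimates, i.e. four parameter-sized arrays, excluding activations.
training_gib = 4 * inference_gib

print(f"weights only: {inference_gib:.2f} GiB")
print(f"training state: {training_gib:.2f} GiB")
```

Under these assumptions the weights alone take about 1.8 GiB and the basic training state about 7.2 GiB per model replica, before any image data or activations are loaded, which is the kind of figure that should be checked against available hardware early in planning.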

- Short Project Timelines: When estimating the time-to-market for computer vision applications, some leaders overly focus on the model development timelines and forget to factor in the extra time needed for:

  - Camera setup, configuration, and calibration

  - Data collection, cleansing, and validation

  - Model training, testing, and deployment

- Lack of Quality Data: Labeled and annotated datasets are the cornerstone of successful CV model training and deployment. General-purpose public datasets for computer vision are relatively easy to come by. However, companies in some industries (for example, healthcare) often struggle to obtain high-quality visuals (for example, CT scans or X-ray images) for privacy reasons. Other times, real-life video and images (for example, footage from road accidents and collisions) are hard to come by in sufficient quantity. Another common constraint is the lack of a mature data management process within organizations, which makes it hard to obtain proprietary data from siloed systems to enhance the public datasets and collect extra data for re-training. In essence, businesses face two main issues when it comes to data quality for computer vision projects:

  - Insufficient public and proprietary data is available for training and re-training.

  - The obtainable data is of poor quality and/or requires further costly manipulations (e.g., annotation).

- Inadequate Model Architecture Selection: Most companies cannot produce sufficient training data and/or don’t have the MLOps maturity needed to churn out advanced computer vision models that perform in line with state-of-the-art benchmarks. Nevertheless, during project requirements gathering, line-of-business leaders often set overly ambitious targets for the data science teams without assessing the feasibility of reaching such targets. As a result, when it comes down to the later development stages, some leaders may realize that the developed model:

  - Does not meet the stated business objectives (due to earlier overlooked requirements in terms of hardware, data quality/volume, computing resources).

  - Demands too much computing power, which makes scalability cost-prohibitive.

  - Delivers insufficient results in terms of accuracy/performance and cannot be used in business settings.

  - Relies on a custom architecture that is too complex (and expensive) to maintain or scale in production.

See Also

- Artificial Intelligence (AI)

- Artificial Neural Network (ANN)

- Machine Learning

- Machine-to-Machine (M2M)

- Human Computer Interaction (HCI)

- Human-Centered Design (HCD)

- Automation

References

- [1] Definition - What Does Computer Vision Mean? Techopedia

- [2] History of Computer Vision. Wikipedia

- [3] How computer vision works. SAS

- [4] Computer vision examples. IBM

- [5] Applications Of Computer Vision. Towards Data Science

- [6] Computer Vision vs. Deep Learning: Comparison Chart. Difference Between

- [7] 5 Pitfalls of Implementing Computer Vision. Infopulse