Cognitive Computing

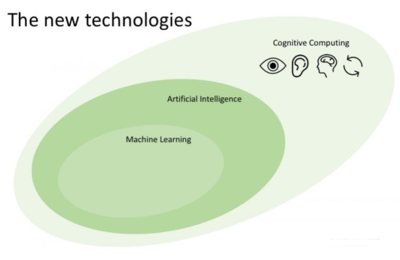

Cognitive Computing (CC) is the simulation of human thought processes in a computer model. It is a technology platform based on the scientific disciplines of artificial intelligence and signal processing. In summary, a cognitive computer plows through structured and unstructured data to find hidden knowledge and present it in an actionable form. CC is an iterative process: humans verify or discard any new discoveries, which allows the system to ‘learn’ over time and better identify patterns.[1]

Evolution of Cognitive Computing[2]

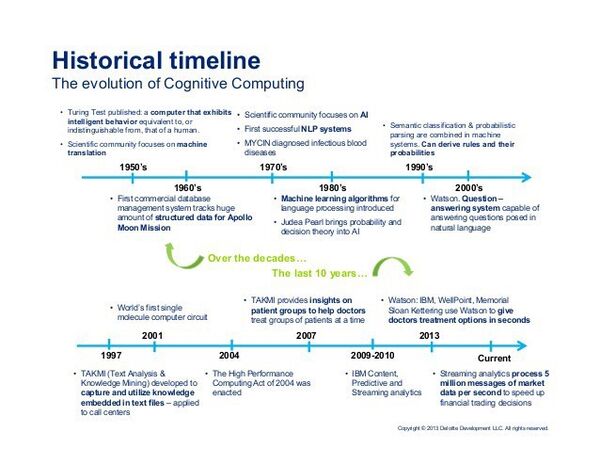

Cognitive Computing got its start roughly sixty years ago, when Alan Turing published his now-famous test (at the time, a way to validate intelligent behavior in a computing machine) and Claude Shannon published a detailed analysis of chess playing as a search problem. AI was just getting started: MIT's John McCarthy (the inventor of LISP) was the first to use the name “artificial intelligence”, in connection with the 1956 Dartmouth Conference.

During the 50s, the first “smart” programs were developed, such as Samuel's checkers player and Newell, Shaw, and Simon's “Logic Theorist”. McCarthy and Marvin Minsky later founded the MIT AI Lab. Around this time, interest in machine translation took off: Masterman and colleagues at the University of Cambridge designed basic semantic nets for machine translation (R. Quillian later demonstrated semantic nets effectively in 1966 at the Carnegie Institute).

The early 60s saw Ray Solomonoff lay the foundations of a mathematical theory of AI; he introduced universal Bayesian methods for inductive inference and prediction. In 1963, Feigenbaum and Feldman published the first collection of AI articles, the now-famous anthology “Computers and Thought”. A student named Danny Bobrow at MIT showed that computers could be programmed to understand natural language well enough to solve algebra word problems correctly. Before the advent of the first generation of database systems capable of tracking substantial amounts of (structured) data to support NASA's missions to the Moon (Project Apollo), J. Weizenbaum built the famous ELIZA, an interactive computer program that could carry on a dialogue in English on any topic. Around 1966, a series of negative reports on machine translation did much to halt NLP work for several years, owing to the complexity of the problem and the immaturity of the techniques available at the time. Before the 60s were over, J. Moses demonstrated the power of symbolic reasoning, and the first good chess-playing programs (such as MacHack) appeared. Also in 1968, a program called Snob (created by Wallace and Boulton) performed clustering (unsupervised classification, in machine learning parlance) using the Bayesian minimum message length criterion (the first mathematical realization of Occam's razor!).

The 70s started with Minsky and Papert's “Perceptrons”, which exposed the limits of basic neural network structures. At around the same time, however, Robinson and Walker established an influential NLP group at SRI, sparking significant and productive research in this area. Colmerauer developed Prolog in 1972, and two years later T. Shortliffe's Ph.D. dissertation on the MYCIN program demonstrated a practical rule-based approach to medical diagnosis (even under uncertainty), influencing expert-system development for years to come. In 1975, Minsky published his widely read and influential article on frames as a representation of knowledge, bringing together several ideas about schemas and semantic links.

The 80s saw the introduction of the first machine learning algorithms for language processing. UCLA's Judea Pearl is credited with bringing probability and decision theory into AI during this period. Temporal events were formalized through interval calculus, pioneered by J. Allen in 1983. By the mid-80s, neural networks trained with the backpropagation algorithm had come into wide use.

Early evidence of successful reinforcement learning (very important in contemporary machine learning systems) dates back to the early 90s: Gerald Tesauro's backgammon program, TD-Gammon, demonstrated that reinforcement learning was powerful enough to create competitive backgammon software. More generally, the 90s witnessed relevant advances in all areas of AI, with significant demonstrations in machine learning, intelligent tutoring, case-based reasoning, multi-agent planning, scheduling, uncertain reasoning, data mining, natural language understanding and translation, and, to some degree, machine vision. One highlight was the combination of semantic classification and probabilistic parsing to derive rules and their probabilities. Also of note is the advent of TAKMI, a tool to capture and use knowledge embedded in text files (applied to contact centers).

The 2000s are when QA (question answering) systems came to prominence. Watson itself is the result of sophisticated work by IBM to further develop and enhance Piquant (Practical Intelligent Question Answering Technology) and adapt it for the Jeopardy! challenge. Since the mid-2000s, there has been substantial progress across a broad set of AI fields, taking the advances of the 90s much further and giving birth to disruptive innovations such as the first program to effectively solve checkers (2007), the self-driving car (2009), computer-generated articles (2010), smartphone-based assistants (2010/11), and a plethora of advances in robotics.

In 2011, Watson famously defeated the two best human contenders on the Jeopardy! game show (Ken Jennings and Brad Rutter), kickstarting the Cognitive Computing era. Recent years have also seen significant developments in deep learning techniques, which give computing systems the ability to recognize images and video with a high degree of accuracy.

What Cognitive Computing Does[3]

According to a TED Talk from IBM, Watson could eventually be applied in a healthcare setting to help collate the span of knowledge around a condition (including patient history, journal articles, best practices, and diagnostic tools), analyze that vast quantity of information, and provide a recommendation. The doctor can then review evidence-based treatment options that account for a large number of factors, including the individual patient’s presentation and history, and hopefully make better treatment decisions.

In other words, the goal (at this point) is not to replace the doctor but to expand the doctor’s capabilities: the system processes an amount of data that no human could reasonably process and retain, then provides a summary and potential applications. The same kind of process could be applied in any field where large quantities of complex data must be processed and analyzed to solve problems, including finance, law, and education. These systems will also be applied in other business areas, including consumer behavior analysis, personal shopping bots, customer support bots, travel agents, tutors, security, and diagnostics. Hilton Hotels recently debuted the first concierge robot, Connie, which can answer questions posed in natural language about the hotel, local attractions, and restaurants. The personal digital assistants now on our phones and computers (Siri and Google, among others) are not true cognitive systems: they have a pre-programmed set of responses and can respond only to a preset number of requests. But the time is coming when we will be able to address our phones, computers, cars, or smart houses and get a real, thoughtful response rather than a pre-programmed one.

As computers become better able to think like humans, they will expand our capabilities and knowledge. Just as the heroes of science fiction movies rely on their computers to make accurate predictions, gather data, and draw conclusions, we will move into an era when computers can augment human knowledge and ingenuity in entirely new ways.

Features of Cognitive Computing[4]

A cognitive computing technology platform uses machine learning and pattern recognition to adapt to and make sense of information, even unstructured information such as natural speech. To achieve those capabilities, cognitive computing typically provides the following attributes, as defined by the Cognitive Computing Consortium in 2014:

- Adaptive Learning: Cognitive systems need to accommodate an influx of rapidly changing information and data that helps fulfill an evolving set of goals. The platforms process dynamic data in real time and adjust to their surrounding environment and data needs.

- Interactive: Human-computer interaction (HCI) is an essential part of cognitive machines. Users interact with cognitive systems and set parameters, even as those parameters change. The technology interacts with other devices, processors, and cloud platforms.

- Iterative and Stateful: Cognitive computing systems help define problems by posing questions or pulling in supplementary data when a query is incomplete or vague. They make this possible by storing information about related situations and potential scenarios.

- Contextual: Cognitive computing systems must identify, understand, and mine contextual data, such as time, syntax, domain, location, requirements, or a specific user’s tasks, profile, or goals. They may draw on multiple sources of information, including auditory, visual, or sensor data, and both structured and unstructured data.
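To make the “Iterative and Stateful” attribute more concrete, here is a minimal Python sketch, assuming the user's request has already been parsed into named fields by some upstream natural-language step that is not shown. The class name `StatefulAssistant`, the `REQUIRED_SLOTS` tuple, and the travel-booking scenario are hypothetical illustrations, not part of the Cognitive Computing Consortium's definition or of any product's API.

```python
from dataclasses import dataclass, field

# Hypothetical fields a travel request must contain before the system can act.
REQUIRED_SLOTS = ("destination", "date")


@dataclass
class StatefulAssistant:
    """Toy illustration of the 'iterative and stateful' attribute.

    The slots gathered so far persist between turns, so a vague request is
    completed over several exchanges instead of being rejected outright.
    """
    slots: dict = field(default_factory=dict)

    def handle(self, parsed_query: dict) -> str:
        # Merge any non-empty values supplied in this turn into the stored state.
        self.slots.update({k: v for k, v in parsed_query.items() if v})
        missing = [s for s in REQUIRED_SLOTS if s not in self.slots]
        if missing:
            # Incomplete query: pose a clarifying question rather than guess.
            return f"Could you tell me the {missing[0]}?"
        return f"Searching trips to {self.slots['destination']} on {self.slots['date']}."


if __name__ == "__main__":
    assistant = StatefulAssistant()
    print(assistant.handle({"destination": "Lisbon"}))  # Could you tell me the date?
    print(assistant.handle({"date": "2024-05-01"}))     # Searching trips to Lisbon on 2024-05-01.
```

The key design choice is that state lives in the assistant object rather than in each individual query, which is what allows the second, partial answer to complete the first.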

How Cognitive Computing Works[5]

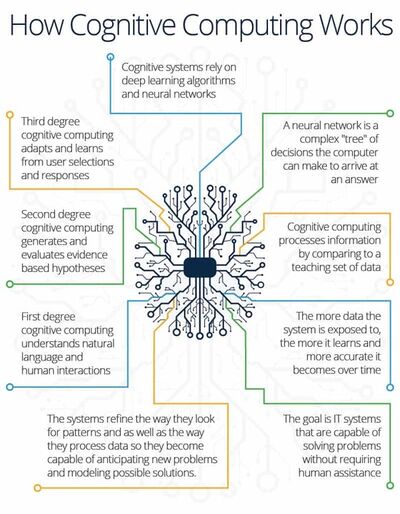

So, how does cognitive computing work in the real world? Cognitive computing systems may rely on deep learning and neural networks. Deep learning is a specific type of machine learning based on an architecture called a deep neural network.

The neural network, inspired by the architecture of neurons in the human brain, comprises systems of nodes — sometimes termed neurons — with weighted interconnections. A deep neural network includes multiple layers of neurons. Learning occurs as a process of updating the weights between these interconnections. One way of thinking about a neural network is to imagine it as a complex decision tree that the computer follows to arrive at an answer.
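The layered structure described above can be illustrated with a minimal, dependency-free Python sketch of a forward pass: each neuron takes a weighted sum of its inputs plus a bias and applies a nonlinearity, and a “deep” network simply chains several such layers. The network shape (two inputs, three hidden neurons, one output), the weight values, and the sigmoid activation are arbitrary assumptions chosen for illustration; the weights are untrained, so the output carries no meaning yet.

```python
import math


def sigmoid(x: float) -> float:
    # Squashes any real number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """One layer: each neuron computes a weighted sum of its inputs plus a bias,
    then applies the sigmoid nonlinearity."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """A deep network is several layers applied in sequence; each layer's
    outputs become the next layer's inputs."""
    activation = inputs
    for weights, biases in layers:
        activation = layer_forward(activation, weights, biases)
    return activation


# Hypothetical 2-3-1 network with arbitrary, untrained weights.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),  # hidden: 3 neurons, 2 inputs each
    ([[0.7, -0.5, 0.2]], [0.05]),                                 # output: 1 neuron, 3 inputs
]

if __name__ == "__main__":
    print(network_forward([1.0, 0.5], layers))  # a single value between 0 and 1
```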

In deep learning, the learning takes place through a process called training. Training data is passed through the neural network, and the output generated by the network is compared to the correct output prepared by a human being. If the outputs match (rare at the beginning), the machine has done its job. If not, the neural network readjusts the weighting of its neural interconnections and tries again. As the neural network processes more and more training data — in the region of thousands of cases, if not more — it learns to generate output that more closely matches the human-generated output. The machine has now “learned” to perform a human task.
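The compare-and-adjust loop described above can be sketched with the perceptron learning rule applied to a single neuron. This is a deliberately simpler technique than the backpropagation used to train deep networks, swapped in here because it maps directly onto the text: the weights change only when the output fails to match the human-prepared answer. The logical AND truth table stands in for human-labeled training data; the function names, learning rate, and epoch limit are illustrative assumptions.

```python
def predict(weights, bias, x):
    # Step activation: output 1 if the weighted sum plus bias exceeds zero.
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train(examples, epochs=20, lr=1.0):
    """Perceptron rule: weights change only when the output is wrong."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in examples:
            error = target - predict(weights, bias, x)  # 0 when the outputs match
            if error != 0:
                mistakes += 1
                # Nudge each weight in the direction that reduces the error.
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:  # every training case now matches the human label
            break
    return weights, bias


# Human-prepared "correct outputs": the truth table for logical AND.
training_data = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

if __name__ == "__main__":
    w, b = train(training_data)
    print([predict(w, b, x) for x, _ in training_data])  # [0, 0, 0, 1]
```

Deep networks replace the step function with differentiable activations and use backpropagation to distribute the error across many layers, but the underlying idea of comparing against a labeled answer and adjusting weights is the same.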

Preparing training data is an arduous process, but the big advantage of a trained neural network is that once it has learned to generate reliable outputs, it can tackle future cases at a greater speed than humans ever could, and its learning continues. The training is an investment that pays off over time, and machine learning researchers have come up with interesting ways to simplify the preparation of training data, such as by crowdsourcing it through services like Amazon Mechanical Turk.
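When training labels are crowdsourced, it is common to have each item labeled by several workers whose answers may disagree. One simple way to turn those answers into training data is a majority vote, sketched below in Python. The function name `aggregate_labels`, the item IDs, and the agreement threshold are hypothetical, and majority voting is only one of several aggregation strategies; this is not a description of how Amazon Mechanical Turk itself processes results.

```python
from collections import Counter


def aggregate_labels(crowd_labels, min_agreement=0.5):
    """Combine several workers' labels per item by majority vote.

    Items whose most common label does not exceed the min_agreement share
    are returned as None so a human expert can review them.
    """
    result = {}
    for item_id, labels in crowd_labels.items():
        label, count = Counter(labels).most_common(1)[0]
        result[item_id] = label if count / len(labels) > min_agreement else None
    return result


# Hypothetical labels collected from three or fewer workers per image.
crowd_labels = {
    "img-001": ["cat", "cat", "dog"],
    "img-002": ["dog", "dog", "dog"],
    "img-003": ["cat", "dog"],
}

if __name__ == "__main__":
    print(aggregate_labels(crowd_labels))
    # {'img-001': 'cat', 'img-002': 'dog', 'img-003': None}
```

Items returned as `None` can be routed to a human reviewer, which fits the human-verified, iterative process described at the start of this article.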

IBM’s Watson cognitive computing platform combines three “transformational technologies.” The first is the ability to understand natural language and human communication; the second is the ability to generate and evaluate evidence-based hypotheses; and the third is the ability to adapt and learn from its human users.

While this work aims at computers that can solve human problems, the goal is not to push human cognitive abilities out of the picture by having computing systems replace people outright, but to supplement and extend them.

Applications of Cognitive Computing[6]

- Education: Even if Cognitive Computing cannot take the place of teachers, it can still be a strong driving force in students’ education. In the classroom, Cognitive Computing essentially takes the form of an assistant personalized for each individual student. This cognitive assistant can relieve some of the stress teachers face while also enhancing each student’s overall learning experience. Teachers may not be able to give every student individual attention; this is where cognitive computers can fill the gap, since some students need a little more help with a particular subject. For many students, interacting with a teacher can also cause anxiety and discomfort. With the help of cognitive computer tutors, these students do not have to face that uneasiness and can gain the confidence to learn and do well in class. While a student is in class, the personalized assistant can develop various techniques, such as creating lesson plans, tailored to that student’s needs.

- Healthcare: Numerous tech companies are developing Cognitive Computing technology for use in the medical field. The ability to classify and identify is one of the main goals of these cognitive devices, and this trait can be very helpful in identifying carcinogens. A cognitive system with this detection capability could help an examiner interpret countless documents in less time than would be possible without it. This technology can also evaluate information about a patient, examining every medical record in depth and searching for indications of the source of the patient’s problems.

- Industry work: Cognitive Computing, in conjunction with big data and algorithms that comprehend customer needs, can be a major advantage in economic decision-making. The combined power of Cognitive Computing and AI has the potential to affect almost every task that humans are capable of performing. This could harm human employment, as much human labor would no longer be needed. It could also increase wealth inequality: the people at the head of the Cognitive Computing industry would grow significantly richer, while workers without ongoing, reliable employment would become worse off. The more industries adopt Cognitive Computing, the harder it will be for humans to compete, and increased use of the technology will also expand the work that AI-driven robots and machines can perform. Only extraordinarily talented, capable, and motivated humans would be able to keep up with the machines. Competitive individuals working in conjunction with AI/CC have the potential to change the course of humankind.

Cognitive Computing Vs. AI[7]

Artificial intelligence and cognitive computing work from the same building blocks, but cognitive computing adds a human element to the results. AI is a number cruncher concerned with receiving large amounts of data, learning from the data, finding patterns in that data, and delivering solutions from that data. Cognitive computing, on the other hand, takes those outputs and examines their nuances.

For example, Google Maps and Fitbit use big data, machine learning, and predictive analytics in their problem-solving methods. Siri, Alexa, Watson, and company-specific chatbots do as well, but they can also receive and deliver information naturally, leveraging both structured and unstructured data. These products are AI technologies embodied with human-like names and personalities.

Advantages of Cognitive Computing[8]

In the process automation field, cognitive computing is set to revolutionize current and legacy systems. According to Gartner, cognitive computing will disrupt the digital sphere unlike any other technology introduced in the last 20 years. Because it can analyze and process large volumes of data, cognitive computing makes it practical to apply computing systems to relevant real-world problems. Cognitive computing has a host of benefits, including the following:

- Accurate Data Analysis: Cognitive systems are highly efficient at collecting, juxtaposing, and cross-referencing information to analyze a situation effectively. Take the healthcare industry as an example. Cognitive systems such as IBM Watson help physicians collect and analyze data from various sources, such as previous medical reports, medical journals, diagnostic tools, and past data from the medical fraternity, thereby helping physicians provide a data-backed treatment recommendation that benefits both the patient and the doctor. Instead of replacing doctors, cognitive computing employs robotic process automation to speed up data analysis.

- Leaner & More Efficient Business Processes: Cognitive computing can analyze emerging patterns, spot business opportunities, and handle critical process-centric issues in real time. By examining a vast amount of data, a cognitive computing system such as Watson can simplify processes, reduce risk, and pivot according to changing circumstances. While this helps businesses build a proper response to uncontrollable factors, it also helps create lean business processes.

- Improved Customer Interaction: The technology can be used to enhance customer interactions by implementing robotic process automation. Robots can provide contextual information to customers without needing to involve other staff members. Because cognitive computing makes it possible to provide only relevant, contextual, and valuable information to customers, it improves the customer experience, making customers more satisfied and much more engaged with a business.

See Also

- Cognitive Bias

- Cognitive Dimensions of Notations

- Cognitive Dissonance

- Cognitive Security

- Cognitive Walkthrough Method

- Artificial General Intelligence (AGI)

- Artificial Intelligence (AI)

- Artificial Neural Network (ANN)

- Machine-to-Machine (M2M)

- Machine Learning

- Human-Centered Design (HCD)

- Human Computer Interaction (HCI)

- Robotic Process Automation (RPA)

- Automatic Content Recognition (ACR)

- Autonomous System (AS)

- Quantum Computing

- Distributed Computing

- Computational Finance

- Computational Logic

- Information Technology (IT)

References

- Definition - What Does Cognitive Computing mean?

- History of Cognitive Computing

- What can cognitive computing do?

- Features of cognitive computing

- How Does Cognitive Computing Work?

- Applications of Cognitive Computing

- Intelligent The Distinction Between Cognitive Computing and AI

- Advantages of Cognitive Computing